Completing my Twitter archive

January 17, 2013 at 9:44 PM by Dr. Drang

My complete Twitter archive became available today (yes, I’ve been checking every day), and I just got done processing it to fit in with my existing archive.[1] Here’s what I did.

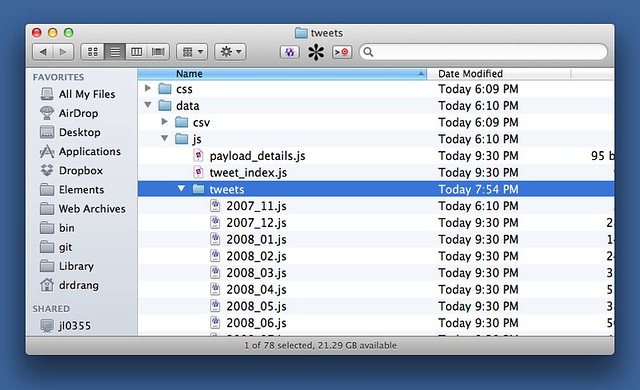

After downloading and unzipping the archive, I had a new tweets directory in my Downloads folder. The tweets themselves are a few levels deep in the tweets directory and are provided in two formats: JSON and CSV. For reasons I hope will become clear soon, I decided to work with the JSON files. They’re kept in several files in a single subdirectory. Each file contains one month’s worth of tweets and the file name is formatted like this: yyyy_mm.js.

The nice thing about this file naming scheme is that it makes alphabetical and chronological order the same, something I exploited when running my script.
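Here is a quick sketch of why that works, using made-up file names in the same yyyy_mm.js pattern. The year comes first and the month is zero-padded, so a plain string sort (which is also how a shell orders the expansion of *.js) puts the files in date order.

```python
# Hypothetical file names following the archive's yyyy_mm.js pattern.
names = ["2012_03.js", "2007_11.js", "2010_12.js", "2008_01.js", "2007_12.js"]

# A plain string sort doubles as a chronological sort because the year
# comes first and the month is always two digits.
ordered = sorted(names)
print(ordered)
```

If the months weren't zero-padded, 2008_10.js would sort ahead of 2008_2.js and the trick would fall apart.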

My first month of tweets, 2007_11.js, looks like this:

    Grailbird.data.tweets_2007_11 =
    [ {
      "source" : "<a href=\"http://twitterrific.com\" rel=\"nofollow\">Twitterrific</a>",
      "entities" : {
        "user_mentions" : [ ],
        "media" : [ ],
        "hashtags" : [ ],
        "urls" : [ ]
      },
      "geo" : {
      },
      "id_str" : "459419062",
      "text" : "This NY Times headline is backward: http://xrl.us/bb3xe. Cart before horse, tail wagging dog.",
      "id" : 459419062,
      "created_at" : "Sat Dec 01 02:52:47 +0000 2007",
      "user" : {
        "name" : "Dr. Drang",
        "screen_name" : "drdrang",
        "protected" : false,
        "id_str" : "10697232",
        "profile_image_url_https" : "https://si0.twimg.com/profile_images/2892920204/2d46631044a7476ad8b355e205ad1e6d_normal.png",
        "id" : 10697232,
        "verified" : false
      }
    }, {
      "source" : "<a href=\"http://twitterrific.com\" rel=\"nofollow\">Twitterrific</a>",
      "entities" : {
        "user_mentions" : [ ],
        "media" : [ ],
        "hashtags" : [ ],
        "urls" : [ ]
      },
      "geo" : {
      },
      "id_str" : "455042882",
      "text" : "Panera's wifi signon page used to crash Safari. Not so with Leopard and Safari 3.",
      "id" : 455042882,
      "created_at" : "Thu Nov 29 17:14:28 +0000 2007",
      "user" : {
        "name" : "Dr. Drang",
        "screen_name" : "drdrang",
        "protected" : false,
        "id_str" : "10697232",
        "profile_image_url_https" : "https://si0.twimg.com/profile_images/2892920204/2d46631044a7476ad8b355e205ad1e6d_normal.png",
        "id" : 10697232,
        "verified" : false
      }
    }, {
      "source" : "<a href=\"http://twitterrific.com\" rel=\"nofollow\">Twitterrific</a>",
      "entities" : {
        "user_mentions" : [ ],
        "media" : [ ],
        "hashtags" : [ ],
        "urls" : [ ]
      },
      "geo" : {
      },
      "id_str" : "453535262",
      "text" : "Safari Stand now reinstalled on my Leopard machine and all's right with the world.",
      "id" : 453535262,
      "created_at" : "Thu Nov 29 06:04:55 +0000 2007",
      "user" : {
        "name" : "Dr. Drang",
        "screen_name" : "drdrang",
        "protected" : false,
        "id_str" : "10697232",
        "profile_image_url_https" : "https://si0.twimg.com/profile_images/2892920204/2d46631044a7476ad8b355e205ad1e6d_normal.png",
        "id" : 10697232,
        "verified" : false
      }
    } ]

As you can see, it starts with a line that defines a variable. The rest of the file sets the variable to a list of objects. I haven’t looked at this especially carefully, but I assume this whole file is basically just one legal JavaScript assignment statement.

What I see when I look at this file, though, is something that’s damned close to a legal Python assignment. The only problems are

- For the left-hand side of the assignment to be valid, I’d have to create some sort of class structure first.

- Python’s boolean constants are True and False, not true and false.

These are minor problems and we’ll get around both of them.

My goal is to pull out all the tweets in the archive and write them to a single plain text file with the same format as my existing archive. As I write this, the last couple of entries in that archive look like this:

    Complained yesterday about not being able to download my Twitter archive. Today it’s available.
    January 17, 2013 at 3:35 PM
    http://twitter.com/drdrang/status/292022255536439296
    - - - - -

    @gruber The reason everyone thinks Apple needs new hit products is because that’s how Apple got to where it is now from where it was in ’97.
    January 17, 2013 at 4:33 PM
    http://twitter.com/drdrang/status/292036995331522562
    - - - - -

Each entry goes tweet, date/time, URL, dashed line, blank line.
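As a minimal sketch of that layout (written for Python 3, with format_entry as a made-up helper name rather than anything from my actual scripts), one entry can be assembled like this:

```python
def format_entry(text, date, url):
    """Return one archive entry: tweet, date/time, URL, dashed line, blank line."""
    return f"{text}\n{date}\n{url}\n- - - - -\n\n"

# Values copied from the first sample entry shown above.
entry = format_entry(
    "Complained yesterday about not being able to download my Twitter archive. Today it’s available.",
    "January 17, 2013 at 3:35 PM",
    "http://twitter.com/drdrang/status/292022255536439296",
)
print(entry, end="")
```

Keeping the blank line inside each entry means entries can be concatenated, or appended to an existing file, without any extra separator logic.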

After a bit of interactive experimenting in IPython (a tool I should have been using long ago), I came up with this script, which I call extract-tweets.py:

python:

     1:  #!/usr/bin/python
     2:  
     3:  from datetime import datetime
     4:  import pytz
     5:  import sys
     6:  
     7:  # Universal convenience variables
     8:  utc = pytz.utc
     9:  instrp = '%a %b %d %H:%M:%S +0000 %Y'
    10:  false = False
    11:  true = True
    12:  
    13:  # Convenience variables specific to me
    14:  homeTZ = pytz.timezone('US/Central')
    15:  urlprefix = 'http://twitter.com/drdrang/status/'
    16:  outstrf = '%B %-d, %Y at %-I:%M %p'
    17:  
    18:  # The list of JSON files to process is assumed to be given as
    19:  # the arguments to this script. They are also expected to be in
    20:  # chronological order. This is the way they come when the
    21:  # Twitter archive is unzipped.
    22:  for m in sys.argv[1:]:
    23:    f = open(m)
    24:    f.readline()      # discard the first line
    25:    tweets = eval(f.read())
    26:    tweets.reverse()  # we want them in chronological order
    27:    for t in tweets:
    28:      text = t['text']
    29:      url = urlprefix + t['id_str']
    30:      dt = utc.localize(datetime.strptime(t['created_at'], instrp))
    31:      dt = dt.astimezone(homeTZ)
    32:      date = dt.strftime(outstrf)
    33:      print '''%s
    34:  %s
    35:  %s
    36:  - - - - -
    37:  ''' % (text, date, url)

In Lines 10 and 11, we define two variables, true and false, to have the Python values True and False. This turns all the trues and falses in the JSON into legal Python. On Line 24, you see that after opening a file, we read the first line but don’t do anything with it. The purpose of this line is to move the file’s position marker to the start of the next line, so that the read call in Line 25 doesn’t include the first line. Everything sucked up by that read is legal Python and can be eval’d to create a list of dictionaries that is assigned to the variable tweets.
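The eval trick is fine for a file you trust, but eval will run whatever code it's handed. As an alternative sketch (not what the script above does), the same file can be read with the standard json module, which already understands true and false. It assumes, as in the sample above, that the assignment sits alone on the first line and that everything after it is valid JSON. The name load_tweets and the trimmed sample data are made up for this example, and it's written for Python 3.

```python
import json
import os
import tempfile

def load_tweets(path):
    """Read one yyyy_mm.js file and return its tweets as a list of dicts."""
    with open(path, encoding="utf-8") as f:
        f.readline()          # discard the "Grailbird.data... =" line
        return json.load(f)   # the remainder is assumed to be plain JSON

# A small stand-in for an archive file, trimmed from the sample above.
sample = '''Grailbird.data.tweets_2007_11 =
[ {
  "id_str" : "459419062",
  "text" : "This NY Times headline is backward: http://xrl.us/bb3xe. Cart before horse, tail wagging dog.",
  "created_at" : "Sat Dec 01 02:52:47 +0000 2007",
  "user" : { "screen_name" : "drdrang", "protected" : false }
} ]
'''

with tempfile.TemporaryDirectory() as d:
    path = os.path.join(d, "2007_11.js")
    with open(path, "w", encoding="utf-8") as f:
        f.write(sample)
    tweets = load_tweets(path)

print(tweets[0]["id_str"], tweets[0]["user"]["protected"])
```

With this approach there's no need to define true and false as Python variables, because the parser maps them to True and False itself.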

With this bit of trickery out of the way, the rest of the script is pretty straightforward. There’s some messing about with timezones because I want my timestamps to reflect my timezone, not UTC, which is what Twitter saves. The conversion is done through the pytz library and follows the same general outline I used in an earlier archiving script.
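To make the timezone step concrete, here is a minimal Python 3 sketch of the same conversion. It swaps pytz for the standard library's zoneinfo module (Python 3.9 and later, and it needs the system's timezone database), and it uses America/Chicago, the zone that US/Central is an alias for. The function name local_timestamp is made up for this example. It also builds the output string by hand instead of using %-d and %-I, since those codes are platform extensions that aren't available everywhere.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo  # standard library in Python 3.9+

INSTRP = "%a %b %d %H:%M:%S +0000 %Y"  # layout of Twitter's created_at field
HOME_TZ = ZoneInfo("America/Chicago")   # the zone 'US/Central' refers to

def local_timestamp(created_at):
    """Convert a UTC created_at string to 'Month D, YYYY at H:MM AM/PM' local time."""
    # strptime returns a naive datetime; mark it as UTC before converting.
    dt = datetime.strptime(created_at, INSTRP).replace(tzinfo=timezone.utc)
    dt = dt.astimezone(HOME_TZ)
    hour = dt.hour % 12 or 12  # 12-hour clock with no leading zero
    return f"{dt:%B} {dt.day}, {dt.year} at {hour}:{dt:%M} {dt:%p}"

print(local_timestamp("Thu Nov 29 06:04:55 +0000 2007"))
# → November 29, 2007 at 12:04 AM
```

The date can change as well as the hour: the sample tweet stamped Sat Dec 01 02:52:47 in UTC falls on the evening of November 30 in Central time.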

The guts of the script is a loop that opens and processes each file given on the command line. With the script saved in the same directory as the JSON files, I ran

python extract-tweets.py *.js > full-twitter.txt

to get all my tweets in one file with the format described above.

As it happens, the Twitter archive I downloaded this evening didn’t include any of today’s tweets. So I copied today’s tweets from the archive I keep in Dropbox, which gets updated every hour, pasted them onto the end of full-twitter.txt, and saved it in Dropbox to be regularly updated from now on.

According to the archive, this was my first tweet:

Safari Stand now reinstalled on my Leopard machine and all’s right with the world.

— Dr. Drang (@drdrang) Thu Nov 29 2007 12:04 AM CST

Remember Safari Stand? Those were the days.

[1] Why did I need to download Twitter’s version of my archive when I already had one of my own? Because of Twitter’s limitation on how many tweets you can retrieve through the API, my archive was missing my first thousand or so tweets. Now I have them and can sleep peacefully again.