Simply supported beam—Fourier series solution of the ODE

June 6, 2026 at 3:36 PM by Dr. Drang

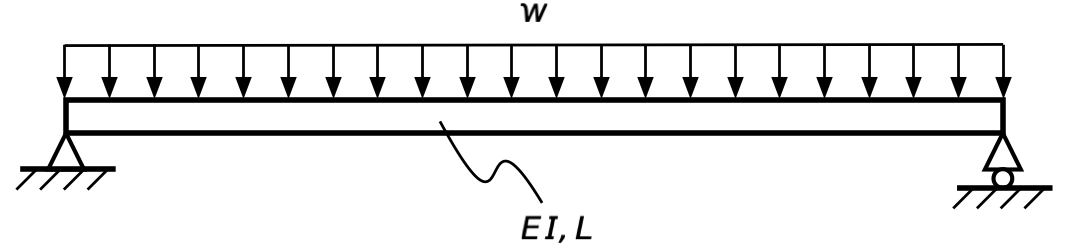

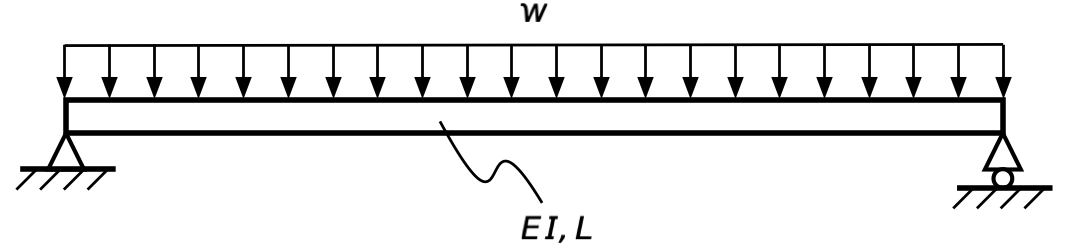

We’re finally here, at the end of all things. In this post, we’ll use a Fourier series to get the formula for the center deflection of a simply supported beam with a uniformly distributed load. We’ll see some of the same math that we saw in the previous Fourier series solution, but the fundamental approach will be different.

Let’s start with the fourth-order ordinary differential equation for a beam with a general loading function, :

The simple supports at both ends give us these boundary conditions:

Let’s work out the solution for a loading condition we haven’t considered before, a distributed load in the form of a sine wave:

where is a positive integer and is some constant amplitude. Given the form of the governing ODE and the boundary conditions, it seems likely that the solution will look like this:

It satisfies the boundary conditions, and if we plug it and the expression for q into the governing ODE, we get

which is a solution for all values of x if

This will work for any value of m that’s a positive integer.

Since the governing ODE is linear, if q is the sum of several sine terms, the solution will be the sum of several sine terms. Returning to our favorite problem,

we now have a path for expressing the solution as a sum of sines, as long as we can express the constant function, , as a sum of sines.

And of course we can. The Fourier sine series expression that fits a constant is

where

This result comes from the orthogonality of the sine function. The Fourier coefficients are the same as those for a square wave.

Plugging this result into the expression for , we get

for odd m. Therefore,

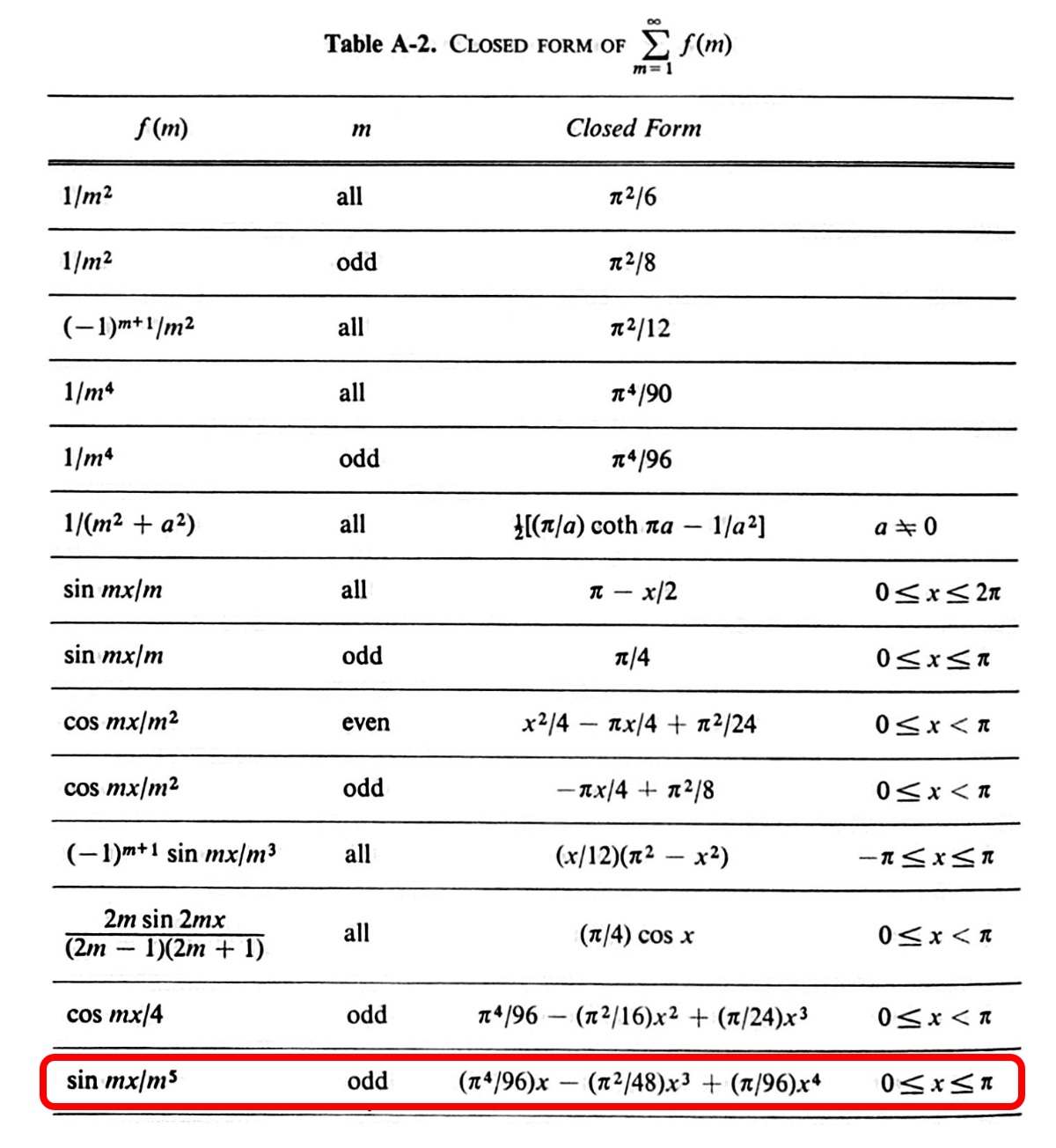

You may recall from our earlier post that there’s a closed-form solution for this sum:

The last entry in this table with gives us

Substituting the closed-form solution in for the sum yields our old friend:

The purpose of this series of posts—apart from a bit of showing off—was to demonstrate why I love structural analysis. It has, even in the simplest of problems, a depth that rewards those who study it. And when you’re confronted with problems that aren’t so simple, you can draw on that depth to understand and solve them.

Simply supported beam—Newmark’s method

June 6, 2026 at 7:44 AM by Dr. Drang

This would have been the last post in the series if I hadn’t realized recently that another method deserved a post. So this is the penultimate. It was described in this 1943 paper by Nathan Newmark and is known—or was known—as Newmark’s method.

I say was because this method is dead. How dead? Forty-five years ago, when I was an undergraduate taking structural analysis courses, Newmark’s method wasn’t being taught in the department that he had been the head of for decades and whose main building is named after him. That’s dead.

Which isn’t to say it wasn’t a good method. Some of my professors talked about using it when they were young, and it was a practical technique when engineers used slide rules and desk calculators, but it was clear by the 80s that its time had passed. Still, it’s desncribed in the textbook I used as an undergrad, and I did eventually learn it well after I was out of school, so here it is.

Newmark’s method is basically a way of doing double integration, so you can use it to go from loading to bending moments or from bending moments to deflection. As Newmark says in the introduction to his paper,

The numerical procedure is approximate, but leads to exact moments (or deflections) when the loading diagram (or diagram of “angle changes”) is made up of segments that are bounded by straight lines or by arcs of parabolas.

For our simply supported, uniformly loaded beam, the “angle change” diagram—which we’ve been calling a curvature diagram—is a parabola, so we can get an exact solution.

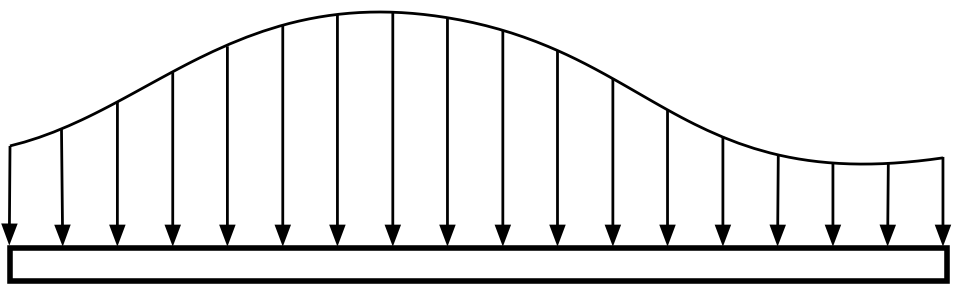

Let’s say we have a beam with any kind of support conditions and some general distributed loading:

The fundamental trick of Newmark’s method is to imagine a set of simply supported beams inserted between the load and the real beam.

The small imaginary beam segments are all of the same length, , and the analysis proceeds by calculating the reaction forces at the ends of these beams and then building a table of loads, shears, and moments based on these reactions (reversed in direction) acting on the large original beam.

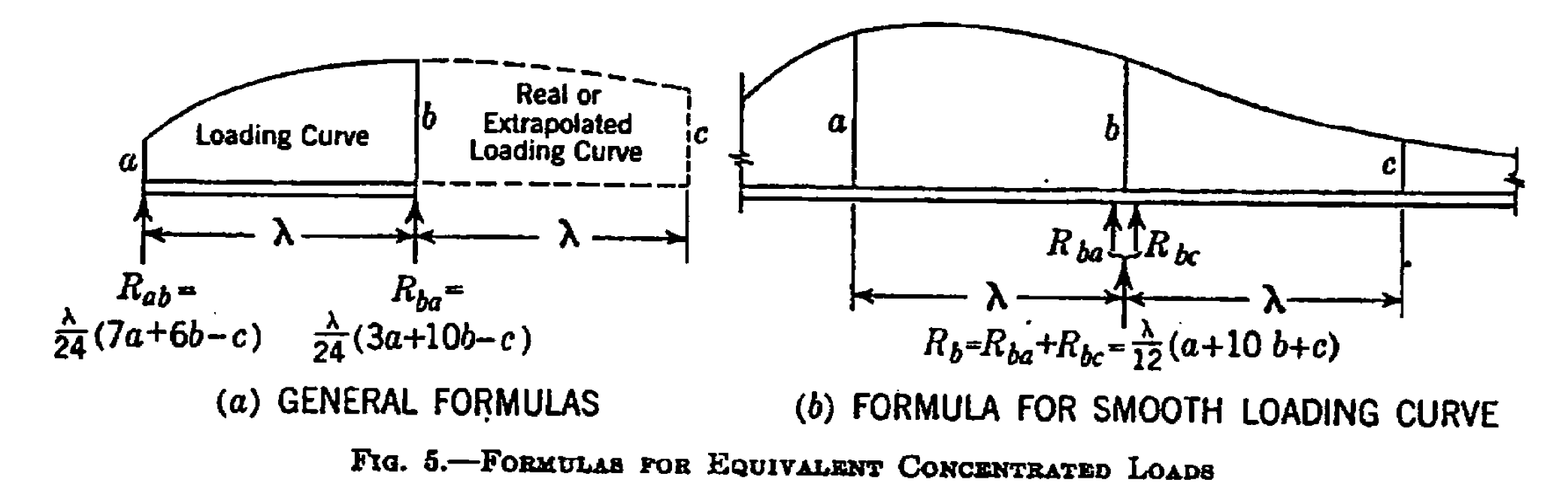

Here’s a figure from the paper that explains how to calculate the reaction forces for parabolically distributed loading:

There are simpler formulas if the loading is distributed linearly.

You may have noticed the double curvature in the right drawing, which means that the load shown there is not parabolic. Newmark suggests using the formulas in this figure even if the loading curve is of higher order—the parabolic formulas are close enough if you divide the beam into several segments.

I’ve focused here on the process of going from loads to bending moments because I think the mechanics of that is easier to understand. But the same process applies to going from curvature to deflection. We went through that in the conjugate beam post.

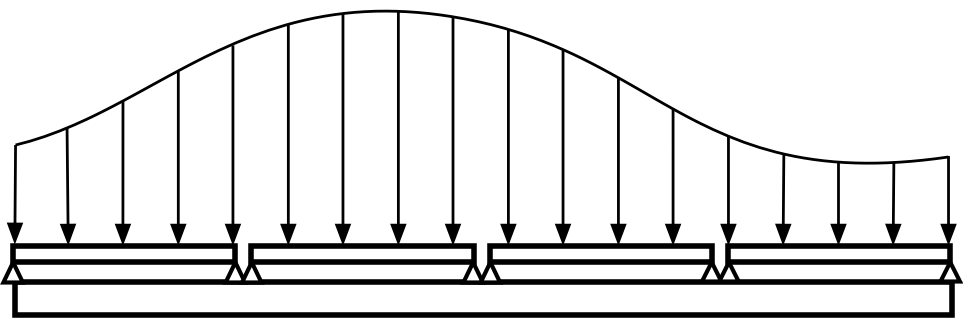

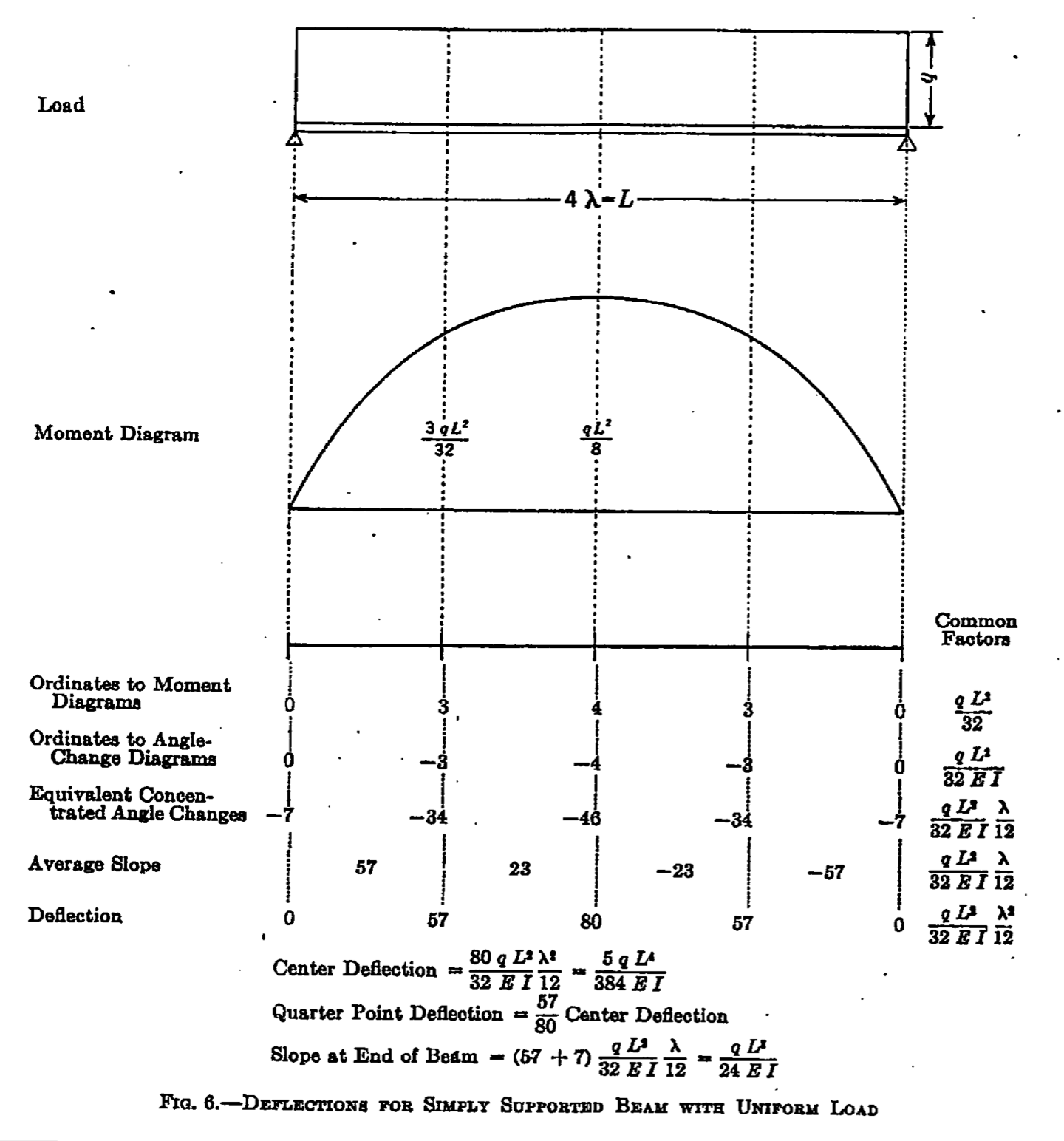

In fact, Newmark does our problem in the paper. He uses the constant q as the intensity of the load and splits the beam into four segments:

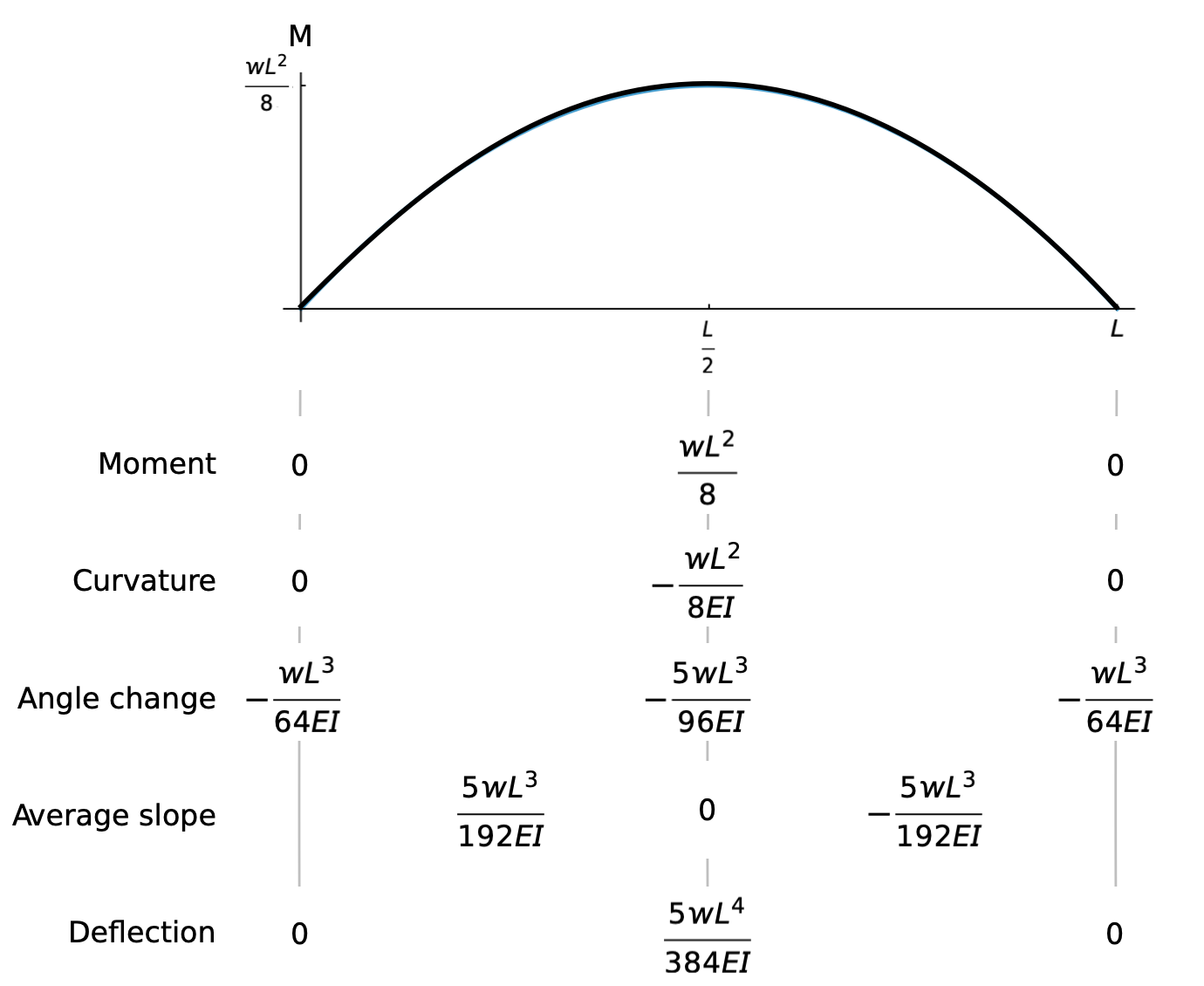

This may look impenetrable, but we can simplify it by using only two segments and explaining each step. Here’s the table, starting with the moment diagram:

The row labeled Moment is the value of the bending moment at each point. The Curvature row is just the moment at each point divided by EI and with the sign reversed. Those are the easy ones.

The Angle change row is based on the curvature values and the formulas in Figure 5 above. The value at the left end is

where we’ve used . This is also the value at the right end.

The value at the center is

We start the Average slope row with the knowledge that the slope at the center of the beam is zero, so if the angle change across the center is

then the average slope must go from

as we move from the left half of the beam to the right half.

(If you’re having trouble with this, remember that angle change is analogous to shear and think of a beam with an antisymmetric shear diagram. If the change in shear across the centerline is , then the shear must be in the left half of the beam and in the right half.)

For the Deflection row, we know that the deflection is zero at the left and right ends. Since the average slope in the left half of the beam is

the deflection at the center must be

an answer we’ve now seen twelve times.

Simply supported beam—dummy unit load method

June 5, 2026 at 7:51 AM by Dr. Drang

We’re in the home stretch of this series, so ANIAT will soon go back to complaining about Apple’s UI choices.1 Next week’s WWDC keynote should provide some inspiration.

But today’s post covers our eleventh method for deriving the center deflection of a uniformly loaded simply supported beam: the dummy unit load method. When I was an undergraduate, this was the method we used to determine the deflections of truss structures, but it’s more general than that. My favorite explanation of why it works is in Nicholas J. Hoff’s The Analysis of Structures. I have the original Wiley hardcover of this book, but you can apparently get both paperback and hardcover reprints.

Hoff uses the principle of virtual work in his explanation, and I’d like to quote him here, but unfortunately his explanation is split between one section on the analysis of trusses and another on the analysis of beams. I couldn’t figure out a nice way to put them together coherently, so you’re just going to have to trust me.

For a beam, you can get the deflection at a particular point by following these steps:

- Work out an equation for the bending moment, M, in the beam for the given set of applied loads.

- Imagine that same beam with those loads removed (this is the dummy structure) and a concentrated unit load placed at the point at which we want the deflection. By definition, the magnitude of a unit load is one. The unit load points in the direction of the deflection we want to calculate.

- Work out an equation for the bending moment, m, in the dummy beam with the unit load.

The desired deflection is

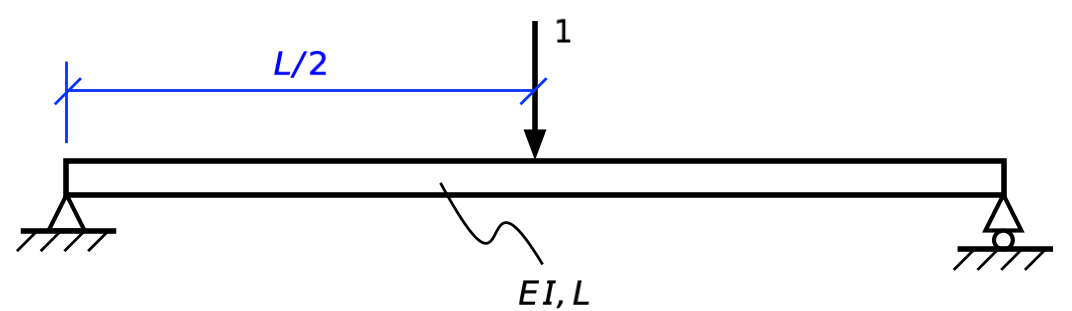

Let’s apply this simple principle to our problem:

The bending moment is

where the x coordinate starts at the left end of the beam and increases to the right, as usual.

This same structure with just a unit load at the center is

Its bending moment is

and m is symmetric about the center of the beam.

Therefore,

and we can take advantage of symmetry to say

You can pretty much do this problem in your head if you’re good at figuring out least common multiples. I’m not, but after writing things out, I did realize that the LCM of and is .

-

Heads up, though. I recently thought of a thirteenth method that’s different enough from the others to merit another post. Sorry about that. ↩

Simply supported beam—finite element method

June 3, 2026 at 8:24 AM by Dr. Drang

The biggest problem I face when writing posts in this series is deciding how much background explanation to put in. If I included only the stuff I do to get the answer, the posts would be very terse: one sketch and a dozen or fewer lines of math. A full explanation, though, could easily turn into several semesters’ worth of structural analysis theory. I’m trying to get close to the former while adding just enough background so readers with engineering experience can follow along. Even if you haven’t used the method of a particular post, you should be able to take what’s in the post and find the whatever further information you need to fill in the inevitable gaps.

This problem is most acute in describing today’s analysis. The finite element method—more precisely called matrix structural analysis or the direct stiffness method when applied to structures made of beam, truss, or frame elements—has many analytical parents: the Rayleigh-Ritz method, the slope-deflection equation, and matrix math. Presenting it without at least some of its antecedents would be a crime. But I will try to keep the introductory material to a minimum.

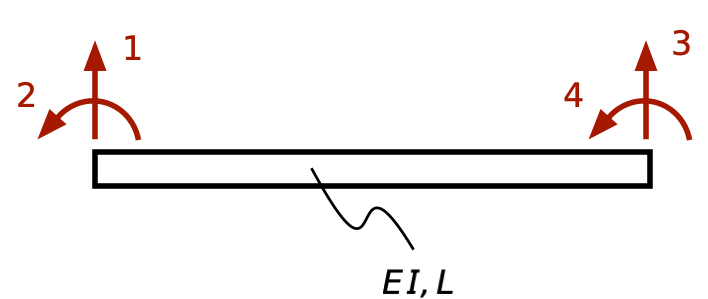

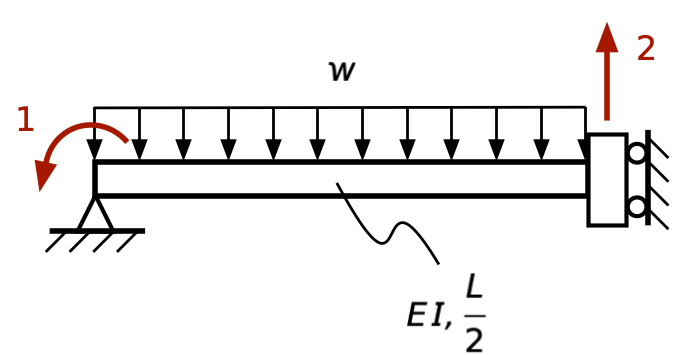

The fundamental piece of this analysis is the beam bending element. This is a length of beam with no interior loading, and its behavior can be described completely by four parameters, called its degrees of freedom. The DOF are the the displacements and rotations at the two ends (or nodes) of the element, usually numbered and directed as follows:

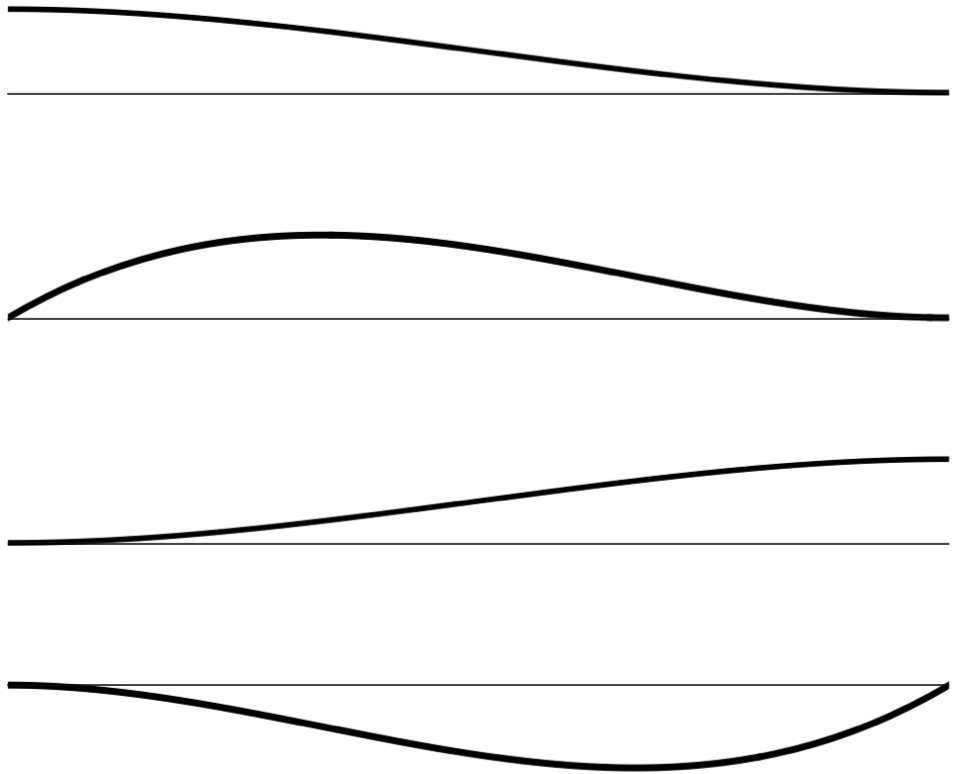

The DOF are often called generalized displacements, and we’ll refer to them as , , , and . The deflected shape of this beam is completely determined by the sum of the products of these generalized displacements and a set of shape functions that look like this:

Each shape function is a cubic curve that has a unit displacement corresponding to one of the DOFs and zero displacement corresponding to the other three DOFs. Apart from the sign convention, these are the same shapes we saw in our discussion of the slope-deflection equation.

There is a generalized force associated with each DOF; these are the forces and moments at the two ends of the beam, and we’ll call them , , , and . Note that when we multiply a u term by an f term, we get an energy expression. For DOF 1 and 3, that’s a displacement multiplied by a force; for DOF 2 and 4, that’s a rotation multiplied by a moment.

For this element, we can write a matrix equation for the relationship between the generalized displacements and generalized forces,

where

and

Each column is the set of generalized forces that correspond to that shape function.

You may notice that the terms in the second row of match the coefficients in the slope-deflection equation before the and terms were combined into the span rotation term.

What should we do when, as in our case of a beam with a distributed load, there is interior loading? We convert that internal loading into what are called consistent loads at the ends. These are equal in magnitude and opposite in direction to the reaction forces and moments that would occur if the interior loads were applied to a beam with both ends fixed: for DOF 1 and 3 and for DOF 2 and 4.

To solve a problem by the finite element method, we assemble the structural stiffness matrix, , and the structural force vector, from all the individual elements and then solve the structural equation,

for the vector of structural displacements, . As you can see, I tend to use lower-case letters for the element terms and upper-case for the structural terms. That’s a reasonably common, but by no means universal, notation.

In general, this assembly and solution of a matrix equation can be quite complex, which is why the finite element method is typically thought of as a process that requires a computer. But our problem is simple enough to do by hand.

Recall that in both the slope-defection and Myosotis solutions, we took advantage of symmetry and considered just half the beam. We’ll do that again here.

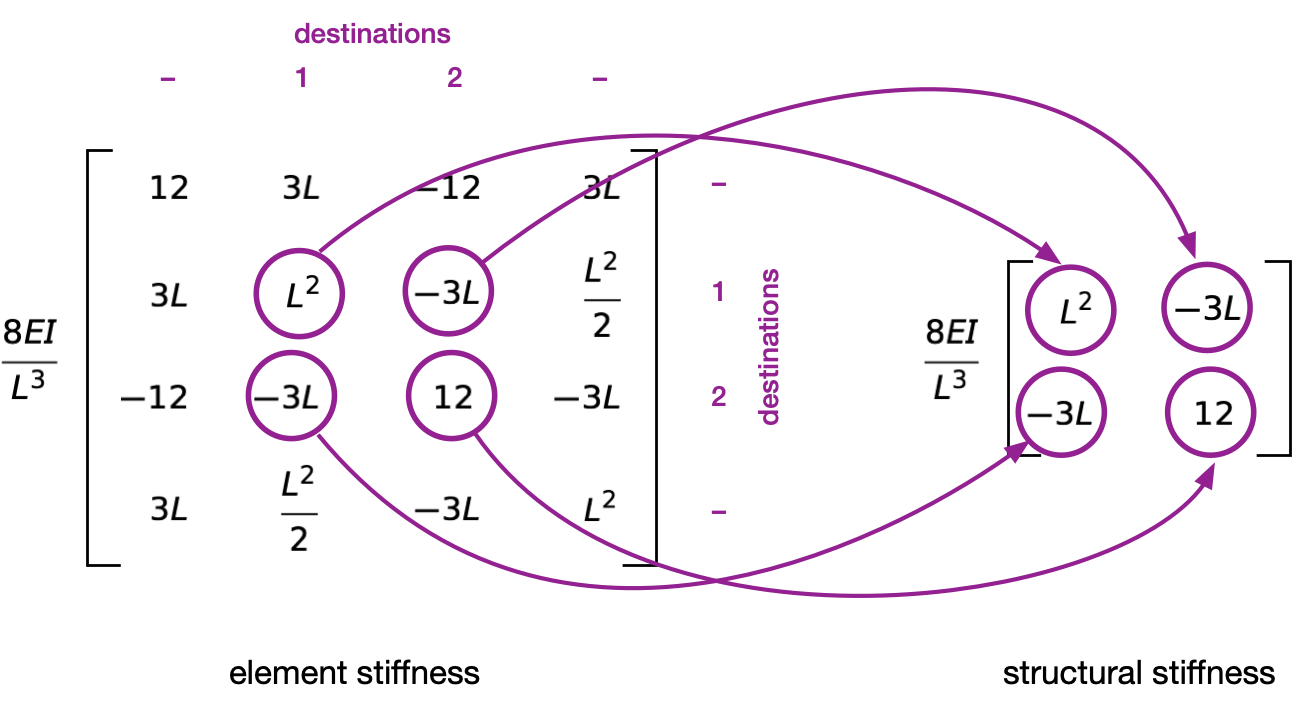

This half-beam has just two DOF, the rotation at the left end and the deflection at the right end. In the assembly process, element DOF 2 matches structural DOF 1 and element DOF 3 matches structural DOF 2. The other element DOF don’t appear in the structural matrix equation because their U terms are zero. Here’s an illustration of the assembly process for the stiffness matrix, accounting for the fact that the beam element has a length of :

I took a class in matrix structural analysis (CE 361) from Prof. Jamshid Ghaboussi in the fall of 1981. He used the phrase destination array for the list of structural DOF that the element DOF go to. I’ve always thought that was a particularly good description and have used it ever since, even though I don’t think I’ve ever seen it in any finite element textbook. Note again that DOF 1 and 4 in the element have no destination because those displacements are zero in the structure.

When the assembly of both the stiffness matrix and force vector (which is done in a similar way) are complete, we get this equation:

There are different ways to solve this, but because a 2×2 matrix is easy to invert, that’s how I did it. Premultiplying each side by the inverse of gives us

Matrix multiplication gives us

The signs are the opposite of what we’ve seen before because of the directions we used in defining the degrees of freedom. Physically, though, these are the same answers we’ve seen several times.