Simply supported beam—energy minimization with Fourier series

May 28, 2026 at 12:22 PM by Dr. Drang

Continuing our trip through various methods to derive the equation for the center deflection of a uniformly loaded simply supported beam, today we’re going to do the first of two solutions using the Rayleigh-Ritz method.

Of all the possible shapes a beam can deform into, the shape it will deform into is the one that minimizes the potential energy of the system, the system being the beam and the load. The equation for the potential energy for our beam, $\Pi$, is

$$\Pi = \int_0^L \frac{1}{2} EI \left(y''\right)^2 dx - \int_0^L w\,y\,dx$$

where the first term comes from the bending of the beam (note its similarity to the formula for a spring) and the second term comes from the load acting through the deflection. The first term is positive because the potential energy of the beam increases as the beam bends; the second term is negative because the potential energy of the uniform load decreases as the load moves down with the beam.

Minimizing an expression like this with respect to the displacement function, y, is what the calculus of variations was invented to do. But Lord Rayleigh and Walther Ritz came up with a way to avoid the calculus of variations. Instead of considering all possible shapes for y, we can consider only certain shapes governed by a set of associated parameters. We then express the potential energy in terms of these parameters and solve for the parameter values that minimize it.

Let’s demonstrate with a simple example. We’ll assume y is of this form,

$$y = a \sin\frac{\pi x}{L}$$

and find the value of a that minimizes the potential energy.

This sine function is a good choice because it meets all the boundary conditions of the simply supported beam: both it and its second derivative are zero at the two ends of the beam, i.e.,

$$y(0) = y(L) = 0, \qquad y''(0) = y''(L) = 0$$

(Recall that the moment is proportional to the second derivative of the displacement—since the moment is zero at a simply supported end, so is the second derivative.)

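Given our choice for y, we can say that

$$y'' = -a \left(\frac{\pi}{L}\right)^2 \sin\frac{\pi x}{L}$$

Therefore,

$$\Pi = \frac{EI}{2}\, a^2 \left(\frac{\pi}{L}\right)^4 \int_0^L \sin^2\frac{\pi x}{L}\,dx - w\,a \int_0^L \sin\frac{\pi x}{L}\,dx$$

The first integral works out to be $L/2$ and the second to $2L/\pi$. So

$$\Pi = \frac{\pi^4 EI}{4L^3}\, a^2 - \frac{2wL}{\pi}\, a$$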

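To find the value of a that minimizes this, we take its first derivative with respect to a and set it equal to zero:

$$\frac{d\Pi}{da} = \frac{\pi^4 EI}{2L^3}\, a - \frac{2wL}{\pi} = 0$$

which means

$$a = \frac{4wL^4}{\pi^5 EI}$$

and

$$y(L/2) = a = \frac{4}{\pi^5}\,\frac{wL^4}{EI} \approx 0.013071\,\frac{wL^4}{EI}$$

Compare this with our previous solution,

$$y(L/2) = \frac{5wL^4}{384EI} \approx 0.013021\,\frac{wL^4}{EI}$$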

and we see that the one-term Rayleigh-Ritz approximation is awfully close to the exact solution.
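As a numerical check on the arithmetic (not part of the hand derivation), here is a minimal Python sketch that integrates the potential energy for the one-term sine shape and finds the amplitude that minimizes it. It assumes unit values for w, L, and EI; the function name `potential_energy` and the midpoint-rule integration are choices made for this illustration only.

```python
import math

def potential_energy(a, w=1.0, L=1.0, EI=1.0, n=2000):
    """Potential energy for y = a*sin(pi*x/L), via the midpoint rule."""
    dx = L / n
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * dx
        s = math.sin(math.pi * x / L)
        y = a * s
        ypp = -a * (math.pi / L) ** 2 * s      # second derivative of y
        total += (0.5 * EI * ypp ** 2 - w * y) * dx
    return total

# The energy is quadratic in a: Pi(a) = A*a**2 - B*a.
# Two evaluations recover A and B: Pi(1) = A - B, Pi(-1) = A + B.
A = (potential_energy(1.0) + potential_energy(-1.0)) / 2
B = (potential_energy(-1.0) - potential_energy(1.0)) / 2
a_min = B / (2 * A)                            # where dPi/da = 0

exact = 5 / 384                                # exact center deflection, units of w*L**4/EI
print(a_min, 4 / math.pi ** 5, a_min / exact)
```

The ratio printed at the end should come out a little under 1.004, which is the "awfully close" of the text: the one-term answer is about 0.4% high.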

But our goal wasn’t to get awfully close; it was to get the exact solution. To do that, we need to take not a single sine term, but the sum of an infinite number of sine terms, like this:

$$y = \sum_{n=1}^\infty a_n \sin\frac{n\pi x}{L}$$

This is called a Fourier series, and you may recall seeing somewhere that a Fourier series can be fit to any function. In general, a Fourier series will have both sine and cosine terms, but for our problem the cosine terms drop out to meet the boundary conditions.

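It may seem that we’ve just assigned ourselves an infinite amount of work, given that our expression for potential energy is now

$$\Pi = \frac{EI}{2} \int_0^L \left[\sum_{n=1}^\infty a_n \left(\frac{n\pi}{L}\right)^2 \sin\frac{n\pi x}{L}\right]^2 dx - w \int_0^L \sum_{n=1}^\infty a_n \sin\frac{n\pi x}{L}\,dx$$

But there are some features of the sine function that we can take advantage of. First and foremost, that nasty integral in the first term is actually quite simple:

$$\int_0^L \left[\sum_{n=1}^\infty a_n \left(\frac{n\pi}{L}\right)^2 \sin\frac{n\pi x}{L}\right]^2 dx = \frac{L}{2} \sum_{n=1}^\infty a_n^2 \left(\frac{n\pi}{L}\right)^4$$

This works because the sine functions are orthogonal: the integral of $\sin\frac{m\pi x}{L} \sin\frac{n\pi x}{L}$ over the span is $L/2$ when $m = n$ and zero otherwise, so all the cross terms vanish.

And the second term can be simplified, too:

$$\int_0^L \sum_{n=1}^\infty a_n \sin\frac{n\pi x}{L}\,dx = \frac{2L}{\pi} \sum_{n=1,3,5,\ldots} \frac{a_n}{n}$$

So we end up with

$$\Pi = \frac{\pi^4 EI}{4L^3} \sum_{n=1}^\infty n^4 a_n^2 - \frac{2wL}{\pi} \sum_{n=1,3,5,\ldots} \frac{a_n}{n}$$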

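We minimize with respect to the $a_n$ by setting

$$\frac{\partial \Pi}{\partial a_m} = 0$$

for all m. Solving for $a_m$ we get

$$a_m = \begin{cases} \dfrac{4wL^4}{\pi^5 EI\, m^5} & m \text{ odd} \\[2ex] 0 & m \text{ even} \end{cases}$$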

Update 28 May 2026 2:39 PM

I forgot to mention here that when I first scratched out this solution in my notebook, I knew that the $a_m$ would be zero for even m because of symmetry and never included them in the expression for y. Here, I decided to include the even values and show that they drop out as a natural consequence of the minimization process.
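To see the even terms drop out numerically, here is a short Python sketch that solves the stationarity condition for each coefficient, with the load integral done by the midpoint rule rather than by hand. It assumes unit w, L, and EI, and the helper names `load_integral` and `coefficient` are made up for this example.

```python
import math

def load_integral(m, L=1.0, n=4000):
    """Midpoint-rule estimate of the integral of sin(m*pi*x/L) from 0 to L."""
    dx = L / n
    return sum(math.sin(m * math.pi * (i + 0.5) * dx / L) for i in range(n)) * dx

def coefficient(m, w=1.0, L=1.0, EI=1.0):
    """a_m from dPi/da_m = 0, i.e. EI*(m*pi/L)**4*(L/2)*a_m = w*load_integral(m)."""
    stiffness = EI * (m * math.pi / L) ** 4 * (L / 2)
    return w * load_integral(m, L) / stiffness

for m in range(1, 7):
    # Closed form for comparison: 4/(pi**5 * m**5) for odd m, zero for even m
    closed = 4 / (math.pi ** 5 * m ** 5) if m % 2 else 0.0
    print(m, coefficient(m), closed)
```

The even-numbered coefficients come out as zero to within rounding error, because the corresponding sine shapes are antisymmetric about midspan and the uniform load does no net work on them.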

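Plugging these results into our series expression for y and evaluating it at $x = L/2$ gives us

$$y(L/2) = \frac{4wL^4}{\pi^5 EI} \sum_{m=1,3,5,\ldots} \frac{1}{m^5} \sin\frac{m\pi}{2}$$

The sine term inside the sum alternates between 1 and –1, so we could write this as

$$y(L/2) = \frac{4wL^4}{\pi^5 EI} \sum_{m=1,3,5,\ldots} \frac{(-1)^{(m-1)/2}}{m^5}$$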

At this point, I could get the sum from Mathematica with this expression,

Sum[(-1)^((m - 1)/2)/m^5, {m, 1, Infinity, 2}]

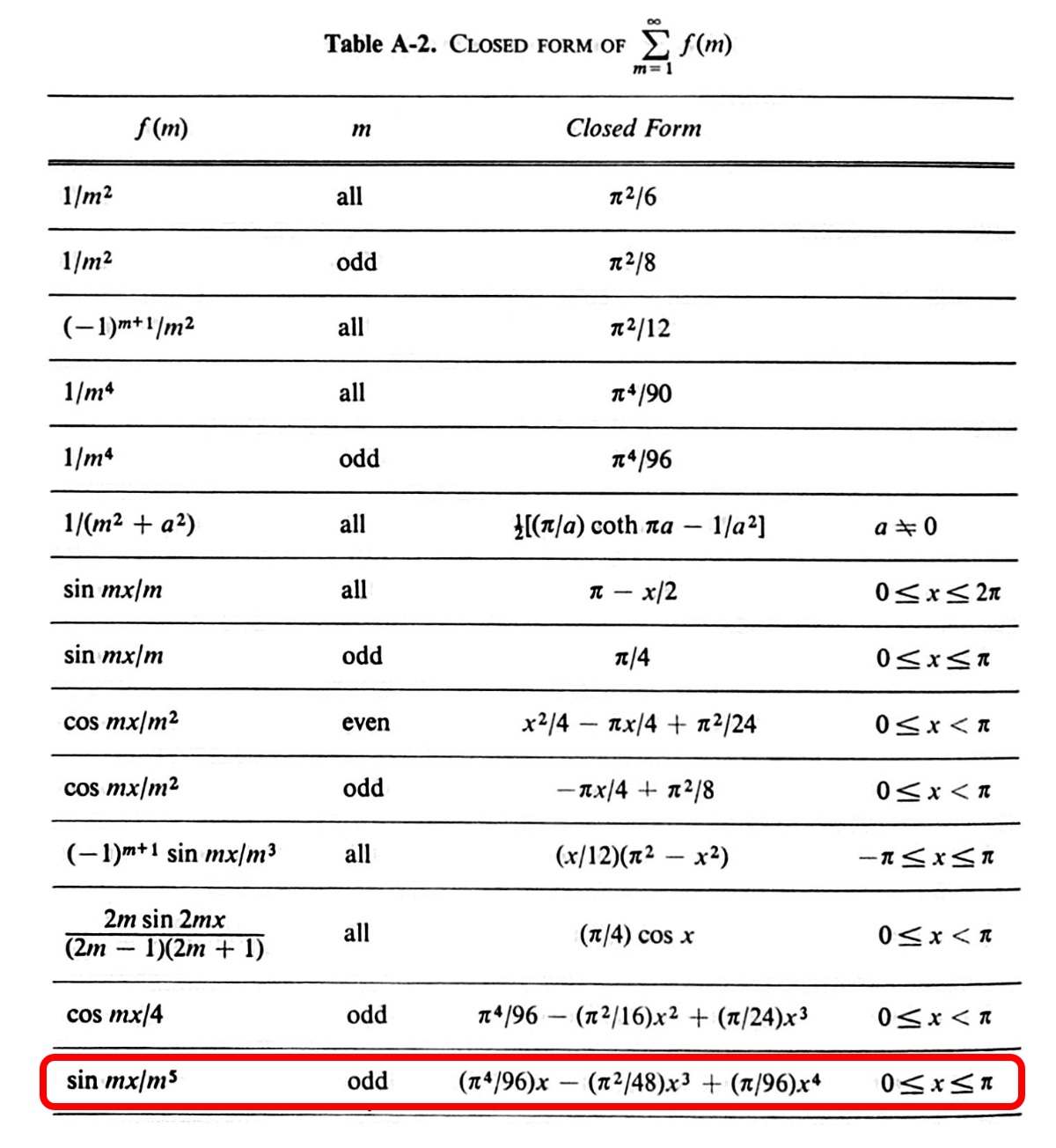

but that would be breaking my self-imposed rule against using computers in the derivation. Luckily, I have a book, An Introduction to the Elastic Stability of Structures by George Simitses, that discusses using infinite series in Rayleigh-Ritz solutions, and it includes this table of closed form solutions for infinite sums:

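We can use the last entry in this table to get

$$\sum_{m=1,3,5,\ldots} \frac{(-1)^{(m-1)/2}}{m^5} = \frac{5\pi^5}{1536}$$

And through the magic of cancellation,

$$y(L/2) = \frac{4wL^4}{\pi^5 EI} \cdot \frac{5\pi^5}{1536} = \frac{5wL^4}{384EI}$$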

I’m not suggesting this is the best way to derive this formula, but it’s nice to know you can do it. And when you don’t need an exact answer, the Rayleigh-Ritz method can give you a good approximation without much work.
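To get a feel for how quickly the series converges, here is a small Python sketch of its partial sums, expressed in units of wL⁴/EI. It is only a numerical illustration, not part of the derivation, and the function name `center_deflection` is an assumption of this example.

```python
import math

def center_deflection(terms):
    """Partial sum of the odd-term series for y(L/2), in units of w*L**4/EI."""
    total = 0.0
    for k in range(terms):
        m = 2 * k + 1                  # odd indices 1, 3, 5, ...
        total += (-1) ** k / m ** 5    # (-1)**k equals (-1)**((m - 1)/2)
    return 4 / math.pi ** 5 * total

exact = 5 / 384
for terms in (1, 2, 3, 5):
    approx = center_deflection(terms)
    print(terms, approx, approx / exact - 1)   # relative error of the partial sum
```

Because the terms fall off as the fifth power of m and alternate in sign, two or three terms are already enough for most practical purposes.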

Simply supported beam—the Myosotis method

May 26, 2026 at 8:28 AM by Dr. Drang

The sixth way we’ll derive the formula for the center deflection of a uniformly loaded simply supported beam is the Myosotis method, which I wrote about over a decade ago. This is the method popularized by J.P. Den Hartog in his Strength of Materials textbook.

Image from Wikipedia.

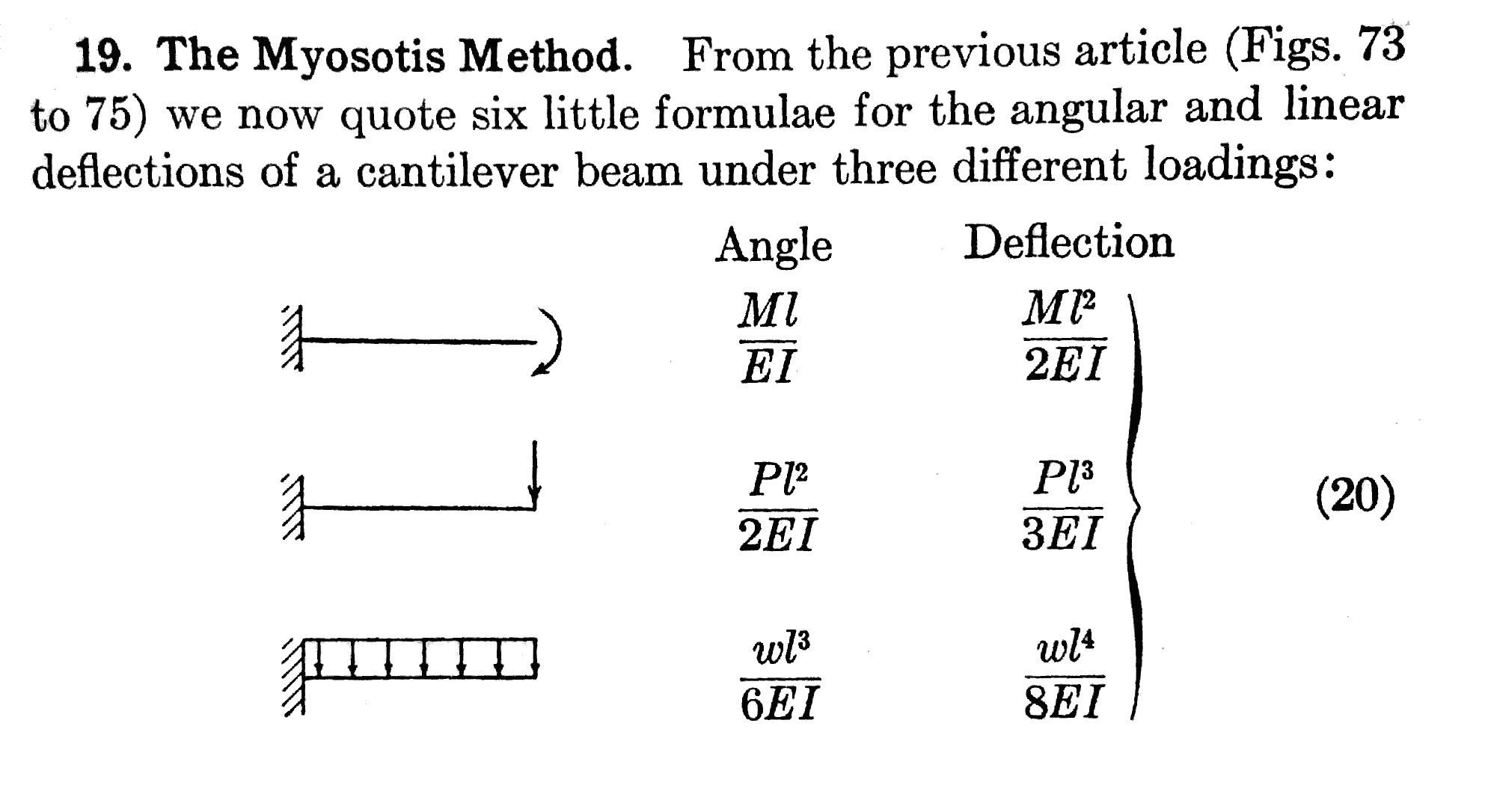

Myosotis is the genus of the forget-me-not flower, and the idea behind the Myosotis method is to memorize the following six equations for the tip angle and deflection of a cantilever beam under different loading conditions.

Once you have the formulas memorized, you can combine them to generate the solution for almost any beam that’s subjected to point and uniformly distributed loads. I wouldn’t say the Myosotis method is, or has ever been, a practical tool for working engineers, but it’s a great pedagogical tool for teaching engineering students how to take advantage of symmetry, antisymmetry, and superposition. Using it even a few times will get you thinking about how complex structural problems can be broken down into a combination of simpler solutions, and that will stay with you even if you never use the Myosotis method again.

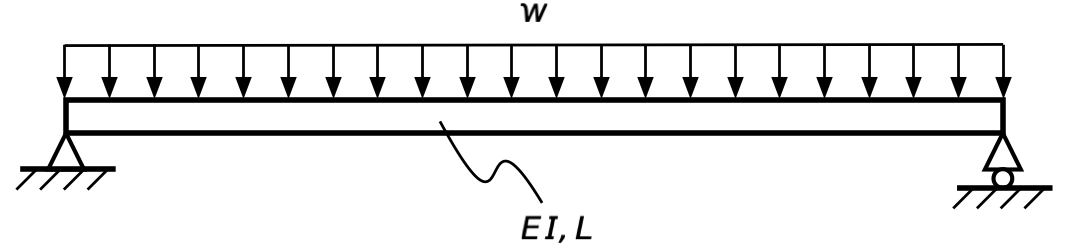

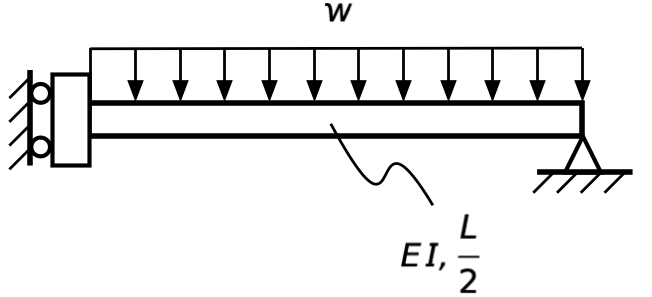

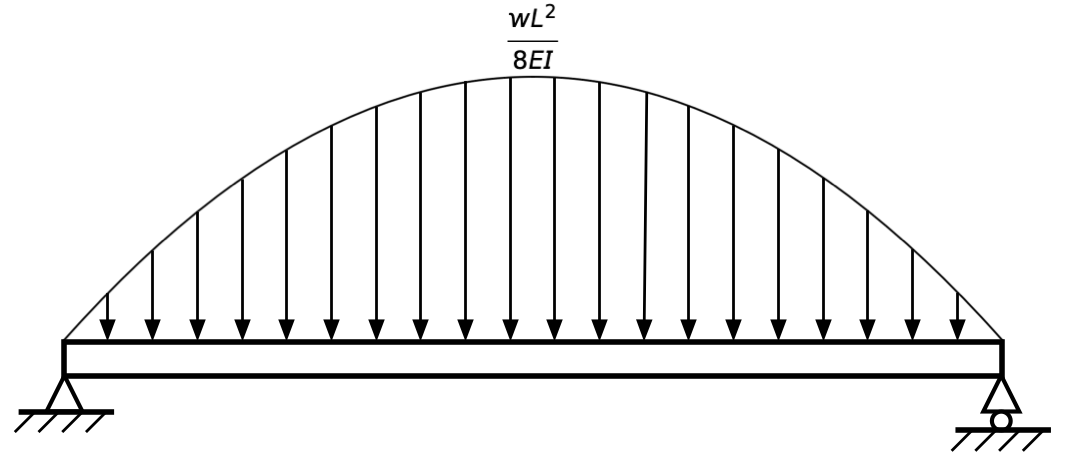

We mentioned in the slope-deflection post that the left half of our simply supported beam behaves like a simple-guided beam. Let’s be more explicit about that. The symmetry of the problem we want to solve,

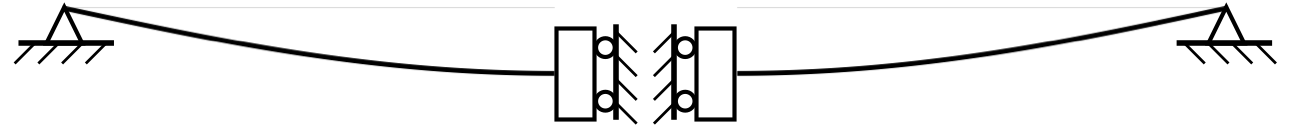

means it deflects like two simple-guided beams back to back:

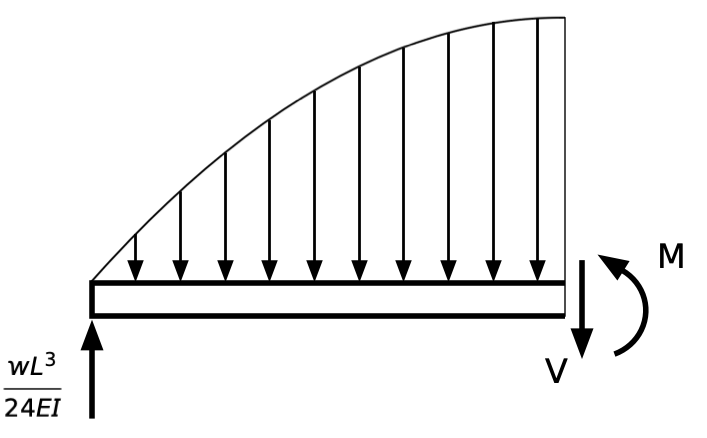

This time, we’ll consider the right half:

Statics tells us that the upward reaction at the right support is $wL/2$.

This is, apart from an overall downward displacement, the same as a fixed-free beam with both a uniform load over its length and an upward load at its tip:

So the downward deflection at the center of our full-length simple-simple beam is equal to the left end deflection of our half-length guided-simple beam, which in turn is equal to the upward right end deflection of our half-length fixed-free beam. One of the purposes of a structural engineering education is to get you to see these relationships in a lot less time than it takes to type them out.

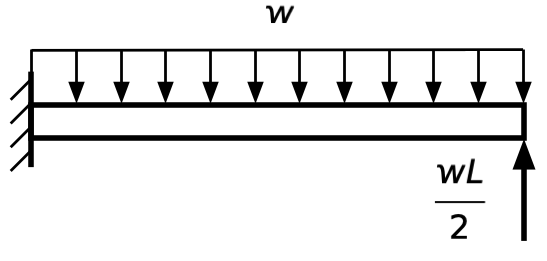

Now we can use superposition and two of the Myosotis formulas to get our answer. Here’s a graphical expression of how the superposition works:

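So the upward deflection of the right end of the fixed-free beam is

$$\delta = \frac{(wL/2)(L/2)^3}{3EI} - \frac{w(L/2)^4}{8EI} = \frac{wL^4}{48EI} - \frac{wL^4}{128EI} = \frac{5wL^4}{384EI}$$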

and that’s the same as the downward center deflection of our original problem, as expected.
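The superposition can be checked with exact fractions. This Python sketch assumes the two standard cantilever tip-deflection results, PL³/3EI for a tip load and wL⁴/8EI for a uniform load, and works in units where w, L, and EI are all 1. The function names are invented for this example.

```python
from fractions import Fraction

def tip_deflection_point_load(P, length, EI=1):
    """Tip deflection of a cantilever with a point load P at its tip."""
    return P * length ** 3 / (3 * EI)

def tip_deflection_uniform_load(w, length, EI=1):
    """Tip deflection of a cantilever with a uniform load w over its length."""
    return w * length ** 4 / (8 * EI)

w = Fraction(1)
L = Fraction(1)
half = L / 2                 # length of the fixed-free half beam
reaction = w * L / 2         # upward support reaction acting at the tip

# Upward deflection from the reaction minus downward deflection from the load
delta = (tip_deflection_point_load(reaction, half)
         - tip_deflection_uniform_load(w, half))
print(delta)  # 5/384
```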

Simply supported beam—slope-deflection equation

May 23, 2026 at 7:51 AM by Dr. Drang

The next technique we’ll use to derive the formula for the center deflection of a simply supported beam with a uniform load is the slope-deflection equation:

$$M_A = \frac{2EI}{L}\left(2\theta_A + \theta_B - 3\psi\right) - \text{FEM}$$

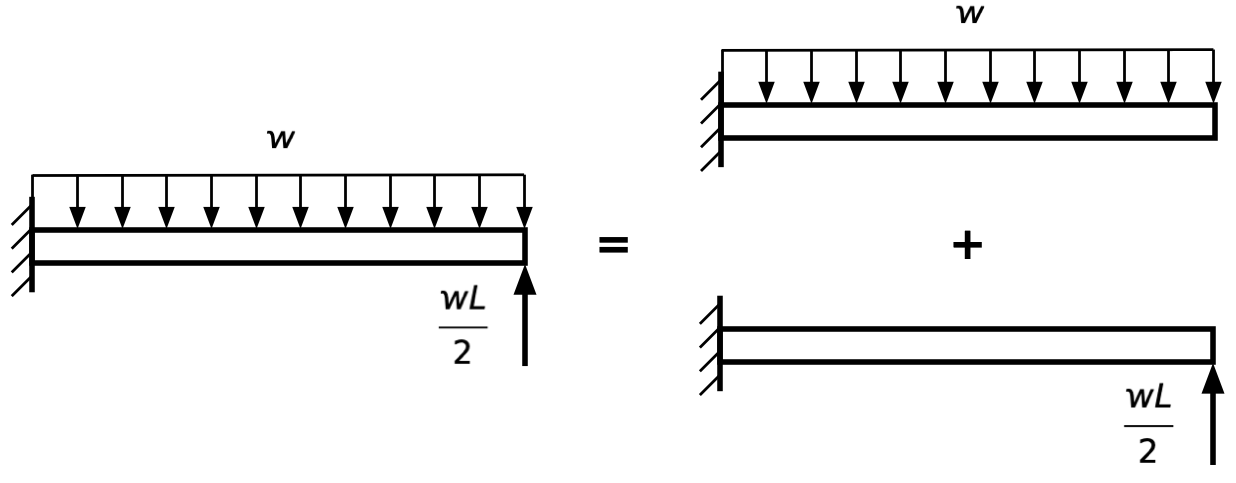

Let’s start by explaining where all the terms come from. Here’s a beam of length L with arbitrary end supports (could be simple, fixed, free, or sprung) and an arbitrary applied load. We’ll call the left end A and the right end B.

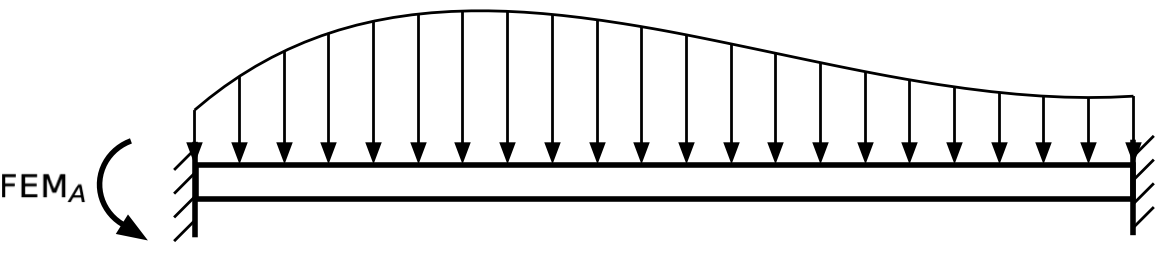

The moment at A is the sum of five terms, which come from the superposition[1] of five conditions. First is the fixed-end moment (FEM), which is the moment that would exist at A if the beam had both ends fixed against vertical displacement and rotation:

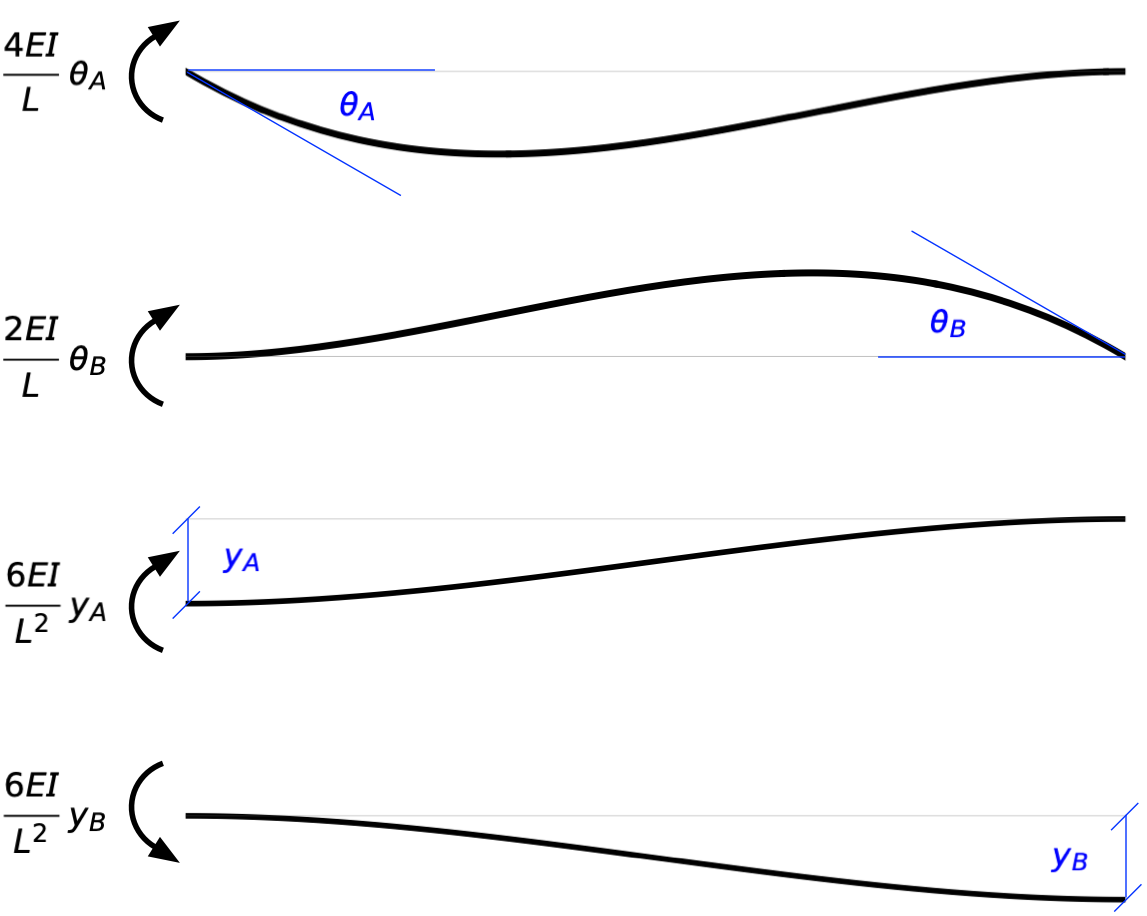

The other four terms come from analysis of the unloaded beam when specific geometric end conditions are applied. The end conditions are specified by the clockwise rotation at each end, $\theta_A$ and $\theta_B$, and the downward deflection at each end, $\Delta_A$ and $\Delta_B$.

Each of these shapes comes from applying just one of these end conditions and keeping the others zero. The moment at A that corresponds to each of these shapes is given in the figure.

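The general solution for the clockwise moment at A is the sum of these five terms:

$$M_A = \frac{4EI}{L}\theta_A + \frac{2EI}{L}\theta_B + \frac{6EI}{L^2}\Delta_A - \frac{6EI}{L^2}\Delta_B - \text{FEM}$$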

Note that we’ve put in some negative signs to account for the counter-clockwise terms.

Let’s now define the span rotation as

$$\psi = \frac{\Delta_B - \Delta_A}{L}$$

This is the clockwise rotation of the straight line connecting points A and B.

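Rewriting the third and fourth terms on the right-hand side of the equation using this definition, we get

$$M_A = \frac{4EI}{L}\theta_A + \frac{2EI}{L}\theta_B - \frac{6EI}{L}\psi - \text{FEM}$$

Pulling out common terms gives us the equation at the top of the post:[2]

$$M_A = \frac{2EI}{L}\left(2\theta_A + \theta_B - 3\psi\right) - \text{FEM}$$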

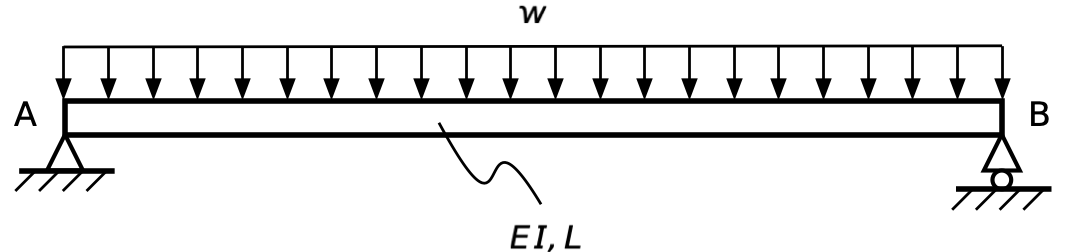
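The algebra that condenses the five-term sum into the compact slope-deflection form can be spot-checked with exact fractions. In this Python sketch, the variable names (`theta_a`, `delta_b`, `psi`, and so on) and the sample values are arbitrary choices for illustration. The sign convention follows the text: clockwise rotations and moments positive, downward deflections positive, and the FEM entering with a negative sign.

```python
from fractions import Fraction

def moment_five_terms(EI, L, theta_a, theta_b, delta_a, delta_b, fem):
    """Clockwise moment at A as the sum of the five superposed conditions."""
    return (4 * EI / L * theta_a
            + 2 * EI / L * theta_b
            + 6 * EI / L ** 2 * delta_a
            - 6 * EI / L ** 2 * delta_b
            - fem)

def moment_compact(EI, L, theta_a, theta_b, psi, fem):
    """The same moment written with the span rotation psi."""
    return 2 * EI / L * (2 * theta_a + theta_b - 3 * psi) - fem

# Arbitrary sample values, kept as fractions so the comparison is exact
EI, L = Fraction(3), Fraction(2)
theta_a, theta_b = Fraction(1, 7), Fraction(-2, 5)
delta_a, delta_b = Fraction(1, 3), Fraction(3, 4)
fem = Fraction(5, 6)
psi = (delta_b - delta_a) / L      # clockwise rotation of the chord from A to B

long_form = moment_five_terms(EI, L, theta_a, theta_b, delta_a, delta_b, fem)
short_form = moment_compact(EI, L, theta_a, theta_b, psi, fem)
print(long_form == short_form)
```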

OK, let’s use this to solve our problem. We’ll start with our simply supported beam and label the two ends:

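The simple supports mean $M_A = 0$ and $\psi = 0$ (the straight line connecting A and B stays horizontal through the deflection). Symmetry tells us $\theta_B = -\theta_A$. And the fixed-end moment for a uniform load is $wL^2/12$ (this is another one of those things burned into my brain through repetition).

So

$$0 = \frac{2EI}{L}\left(2\theta_A - \theta_A\right) - \frac{wL^2}{12}$$

and therefore

$$\theta_A = \frac{wL^3}{24EI}$$

which should look familiar.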

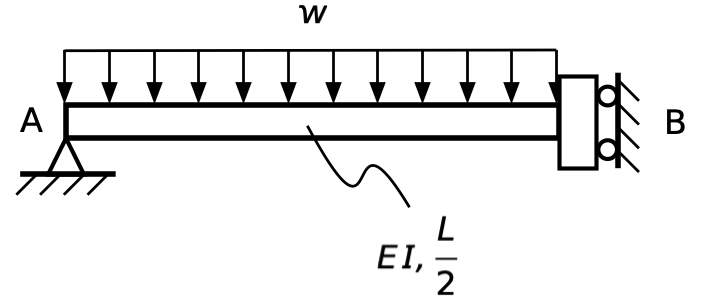

To get the center deflection, we need to use symmetry in another way. It tells us that the slope at the center of the beam is zero, which means we can treat the left half of the beam as its own problem:

The right end of this half-length beam is guided, which means it’s free to deflect but prevented from rotating. This beam will behave exactly like the left half of our original beam.

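For this half-length beam, we know that

$$M_A = 0, \qquad \theta_A = \frac{wL^3}{24EI}, \qquad \theta_B = 0, \qquad \Delta_A = 0, \qquad \text{FEM} = \frac{w(L/2)^2}{12} = \frac{wL^2}{48}$$

where we’ve taken the expression for $\theta_A$ from the intermediate solution above. Plugging these into the slope-deflection equation, with a span of $L/2$ and a span rotation of $\psi = \Delta_B/(L/2)$, gives us

$$0 = \frac{4EI}{L}\left(\frac{wL^3}{12EI} - \frac{6\Delta_B}{L}\right) - \frac{wL^2}{48}$$

And therefore, as we’ve seen five times now,

$$\Delta_B = \frac{5wL^4}{384EI}$$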

We had to solve two equations to get this result, but they weren’t simultaneous equations, so it wasn’t that much work.
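The two sequential solutions can be replayed with exact fractions. This Python sketch assumes unit w, L, and EI and uses the slope-deflection equation in the form M_A = (2EI/L)(2θ_A + θ_B − 3ψ) − FEM, with the fixed-end moment of a uniformly loaded span taken as w·(span)²/12. The variable names are illustrative.

```python
from fractions import Fraction

w = L = EI = Fraction(1)

# Step 1: full span. M_A = 0, psi = 0, theta_B = -theta_A.
# 0 = (2*EI/L)*(2*theta_A - theta_A) - FEM, so theta_A = FEM*L/(2*EI)
fem_full = w * L ** 2 / 12
theta_a = fem_full * L / (2 * EI)

# Step 2: left half, simple at A and guided at B. M_A = 0, theta_B = 0.
# 0 = (2*EI/half)*(2*theta_A - 3*psi) - FEM_half, solved for psi
half = L / 2
fem_half = w * half ** 2 / 12
psi = (2 * theta_a - fem_half * half / (2 * EI)) / 3

# The guided end drops by psi times the half span; that is the center deflection
delta_center = psi * half
print(theta_a, delta_center)  # 1/24 5/384
```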

1. There are other ways to explain the slope-deflection equation. I decided to explain it using superposition after getting this Mastodon reply from Chris Huck.

2. Most texts define the FEM as positive in the clockwise direction, so it has a positive sign in the slope-deflection equation. Since the FEM at the left end of a beam under most loading conditions is counter-clockwise, I prefer to define it that way and use a negative sign in the equation.

Simply supported beam—conjugate beam method

May 22, 2026 at 8:01 AM by Dr. Drang

The fourth way we’re going to derive the formula for the center deflection of a simply supported beam with a uniform load is the conjugate beam method. This is probably tied with the moment-area method for the simplest and fastest way to get the formula—at least if you’ve memorized the properties of parabolas.

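I wrote about the conjugate beam method last year. In a nutshell, it takes advantage of the similarity of the relationships between bending moment and distributed load,

$$\frac{d^2M}{dx^2} = -w$$

and between displacement and bending moment,

$$\frac{d^2y}{dx^2} = -\frac{M}{EI}$$

(Here w and y are taken as positive downward and sagging moments as positive.)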

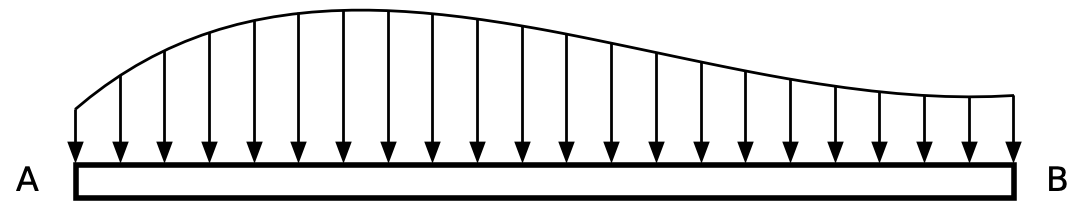

To get the deflection of the beam, we construct a conjugate beam that’s loaded by the $M/EI$ diagram of the real beam. Calculating the moment at a point of the conjugate beam gives us the deflection at the corresponding point in the real beam.

For our problem, the conjugate beam looks like this:

The supports of the conjugate beam don’t always match the supports for the real beam, but they do for simple supports, so that makes things easy. The intensity of the distributed load at the peak of the parabola is $wL^2/8EI$.

To get the bending moment at the center of the conjugate beam, we analyze a free-body diagram of its left half:

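By symmetry, the reaction at the left support is $wL^3/24EI$ (that’s the area under the left half of the parabola, and we’ve done that calculation before). That’s also the resultant of the distributed load on this half. The line of action of the resultant is ⅜ of the way from the center to the left support. Therefore, the moment at the center of the conjugate beam is

$$\frac{wL^3}{24EI}\cdot\frac{L}{2} - \frac{wL^3}{24EI}\cdot\frac{3}{8}\cdot\frac{L}{2} = \frac{wL^3}{24EI}\cdot\frac{5}{8}\cdot\frac{L}{2} = \frac{5wL^4}{384EI}$$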

so this is the center deflection of the real beam, as expected.
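If you haven’t memorized the properties of parabolas, the resultant and its line of action can be recovered by integration. This Python sketch assumes unit w, L, and EI and takes the conjugate load as the simply supported moment diagram divided by EI, wx(L − x)/2EI. It uses a single-interval Simpson’s rule, which is exact for polynomials up to cubic, so the fractions come out exact. The helper names are invented for this example.

```python
from fractions import Fraction

w = L = EI = Fraction(1)
half = L / 2

def simpson(f, a, b):
    """One-interval Simpson's rule; exact for polynomials up to degree 3."""
    return (b - a) / 6 * (f(a) + 4 * f((a + b) / 2) + f(b))

def conjugate_load(x):
    """M/EI of the real beam: parabolic, peaking at w*L**2/(8*EI) at midspan."""
    return w * x * (L - x) / (2 * EI)

# Resultant of the load on the left half, equal to the left reaction by symmetry
resultant = simpson(conjugate_load, Fraction(0), half)

# First moment of that load about the center of the beam
load_moment = simpson(lambda x: conjugate_load(x) * (half - x), Fraction(0), half)

# Distance of the resultant from the center, as a fraction of the half span
arm_fraction = load_moment / resultant / half

# Moment at the center of the conjugate beam = center deflection of the real beam
center_moment = resultant * half - load_moment
print(resultant, arm_fraction, center_moment)  # 1/24 3/8 5/384
```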