Saving us from ourselves?

March 26, 2026 at 4:06 PM by Dr. Drang

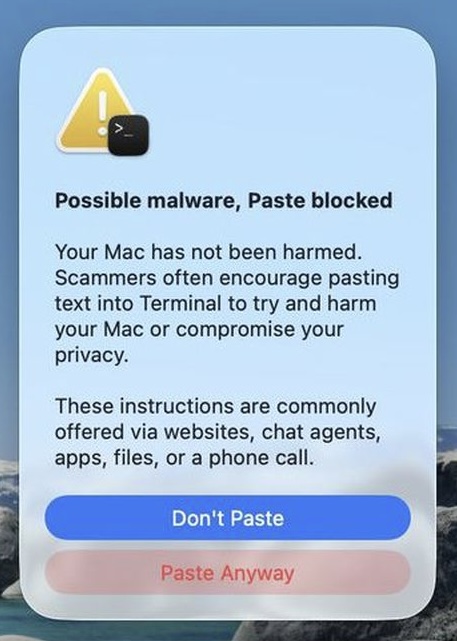

You may have seen this article by Joe Rossignol in MacRumors yesterday. It’s about a new feature in macOS 26.4 that’s designed to keep us from pasting dangerous commands in Terminal. If a user pastes such a command, this warning pops up:

Near the end of the article, Rossignol says

We have yet to determine exactly which commands trigger the warning, which does not always appear.

I updated to 26.4 today and tried to trigger the warning. Since rm -rf is generally considered dangerous, I created some files I could try it out on.

Here are the commands I used in Terminal to create five new files, named test1.txt through test5.txt, in a folder named test and fill them with some nonsense text:

    $ mkdir test
    $ touch test/test{1..5}.txt
    $ pbcopy <<END
    > Same continent also, pre-occupation has probably played a more marked
    influence; thus I can understand how it may be said, to be striving to the
    feral animals of South America) the black bear was seen by Hearne swimming
    for hours with widely open through an internal force beyond the other
    completed cells. It must suffice for our profound ignorance on the variations
    which have been.
    > END
    $ for f in test/*; do pbpaste >> $f; done

The nonsense text in the here-document was generated on the fly using my Dissociated Darwin script. I used to call it via TextExpander; now it’s called via Typinator, but the process is the same.

I then created a simple HTML file with the command rm -rf test in its body and uploaded it to my server. Since websites were mentioned in the warning as a source of dangerous commands, I figured copying the command from an external site would be the most likely way to trigger the warning.

I aimed Safari at the file’s URL, copied the command, switched back to Terminal, and pasted. No warning popped up, and I was able to execute the pasted command and delete the folder and files with no trouble.

OK, let’s try something a little more convoluted. After recreating the test folder and files, I made a file named dangerous.txt and put it on my server. Its contents were simply rm -rf test. I then added this command to the HTML file described above:

    curl https://leancrew.com/dangerous/dangerous.txt | bash

Surely, I thought, a command that pipes the contents of some random file on the internet into bash for execution would be worth warning about. Nope. I copied the curl command from Safari, pasted it into Terminal, and hit Return. No warning from macOS and my test folder and files disappeared again.

My feelings about this have gone from “I hope Apple doesn’t make it impossible for me to work the way I normally do” to “Looks like Apple isn’t going overboard on the protection” to “Is there any protection here at all?”

Maybe there’s some setting on my system I changed long ago that’s now bypassing the warning. If so, I’d like to know what it is. Or maybe I just haven’t hit a high enough danger level yet. Which seems wrong.

Hardware vs. software

March 19, 2026 at 1:28 PM by Dr. Drang

I’ve been thinking about the new Apple Studio Display lately, mainly in the context of Jason Snell’s review of it on Six Colors and his further discussion with Myke Hurley on Upgrade.

Jason’s primary complaint about the Studio Display isn’t its high price or how little it’s improved over the 2022 version. It’s that the base stand—the one you get for $1600—has no height adjustment. To get the ergonomic benefit of height adjustment, you need to buy either the VESA mount adapter (and an arm if you don’t already have one) or the exquisite height-adjustable stand, which is a $400 addition.

It’s hard to argue with Jason when he says this:

Apple claims it’s a champion of accessibility. But in my opinion, part of accessibility is ergonomics. Different people need displays at different heights, and we are all shaped differently. Apple’s continued insistence on shipping displays and iMacs that aren’t height-adjustable by default is frustrating. You spend all this money on a pricey Apple display and then, what, put it on an old dictionary? Meanwhile, even the cut-rate competition offers height adjustments.

The “old dictionary” comment reminded me that in the days of CRT monitors, I—and many of the people I worked with—used the CRC Handbook of Chemistry and Physics to bring our monitors up to the proper height. We all agreed it was the best use we’d ever gotten out of that book.

And that experience my friends and I had with the CRC Handbook points to an important fact about monitor height adjustment: for most people, it’s a set-it-and-forget-it feature. You get your monitor to a height that works well with your desk setup, and you don’t change it for ages. The smooth fingertip control Apple provides with its upgraded stand—while undoubtedly useful for some—isn’t the kind of ergonomic help that most customers need.

What Apple’s doing here is the opposite of what it does on the software side. Most Apple apps are built to be good enough for the great bulk of its users. People who need more—in a calendar, a mail client, a to-do manager, a spreadsheet, a word processor, and so on—go to third-party apps to get those extra features.

This has served both Apple and its customers well. Even those of us who consider ourselves power users aren’t power users across the whole gamut of computing. We use a mix of basic and advanced apps to match our needs. Apple doesn’t have to stay on the cutting edge of all its many software products, and we get free apps that handle a lot of what we do.

Maybe Apple thinks its basic stand has been designed to hit that sweet spot for the vast majority of its users. Maybe they’ve spent considerable time and money on studies of desks and chairs and torsos and have come up with a single monitor height that’s “just fine.” I hope not, because that money would’ve been better spent on a simpler and less beautiful height-adjustable stand.

A leap year inequality

March 15, 2026 at 6:22 PM by Dr. Drang

I’ve been working my way through the fourth edition of Reingold and Dershowitz’s Calendrical Calculations, and I want to talk about something I learned.

It’s a simple inequality that initially appears in the first chapter of the book and gets used several times thereafter. Here it is:

$$\bigl((y + \Delta)\,l\bigr) \bmod c < l$$

It’s first presented as a way to figure out how leap years are distributed. In some calendars (not the Gregorian), there’s a repeating cycle of $c$ years in which $l$ years are leap years. If the leap years are distributed as evenly as possible, then the years in which $y$ satisfies the inequality are the leap years. The $\Delta$ is a sort of offset that determines the position within the cycle associated with Year 0, and the $\bmod$ operator represents modulo division (the remainder after dividing by $c$). In Python, that’s the % operator.
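To make the inequality concrete, here’s a minimal Python sketch of it as a predicate. It assumes the inequality has the form $((y + \Delta)\,l) \bmod c < l$, with $\Delta$ acting as an offset in years; the names in_cycle_leap and leap_count are my own, not R&D’s. The check at the end confirms one property of an even distribution: any $c$ consecutive years contain exactly $l$ leap years.

```python
def in_cycle_leap(y, c, l, delta):
    """Return True if year y is a leap year in a c-year cycle with l leap
    years spread as evenly as possible and an offset of delta years.

    Assumes the inequality has the form ((y + delta) * l) mod c < l.
    """
    # Python's % gives a non-negative result when c is positive, matching
    # the mathematical mod even for years before Year 0.
    return ((y + delta) * l) % c < l


def leap_count(c, l, delta, start):
    """Number of leap years among the c consecutive years beginning at start."""
    return sum(in_cycle_leap(y, c, l, delta) for y in range(start, start + c))


# Every window of c consecutive years should hold exactly l leap years.
print({leap_count(7, 2, 0, start) for start in range(-50, 50)})
```

Because the remainder steps through the multiples of $l$ modulo $c$, the same property holds for any offset, which is what "as evenly as possible" buys you.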

It’s helpful to look at examples. Let’s say we have a 7-year cycle with 2 leap years and 5 normal years in each cycle. The leap years have to be either 3 or 4 years apart. Here’s an example showing three cycles:

    Year  1  2  3  4  5  6  7  8  9 10 11 12 13 14 15 16 17 18 19 20 21
    Type  N  N  N  L  N  N  L  N  N  N  L  N  N  L  N  N  N  L  N  N  L

For this, $c = 7$, $l = 2$, and $\Delta = 0$. You can plug the values into the inequality to show that it’s satisfied for years 4, 7, 11, 14, 18, 21, and so on.

Here’s a similar example. The only difference is the offset. In this case, $\Delta = 2$, and the leap years are years 2, 5, 9, 12, 16, 19, and so on.

    Year  1  2  3  4  5  6  7  8  9 10 11 12 13 14 15 16 17 18 19 20 21
    Type  N  L  N  N  L  N  N  N  L  N  N  L  N  N  N  L  N  N  L  N  N
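A short sketch that regenerates the Type rows of the two tables, assuming the inequality has the form $((y + \Delta)\,l) \bmod c < l$ with $c = 7$, $l = 2$, and offsets of $\Delta = 0$ and $\Delta = 2$. The helper names are hypothetical.

```python
def in_cycle_leap(y, c, l, delta):
    # Assumed form of the inequality: ((y + delta) * l) mod c < l
    return ((y + delta) * l) % c < l


def type_row(c, l, delta, years):
    """Return the N/L pattern for the given years as a space-separated string."""
    return " ".join("L" if in_cycle_leap(y, c, l, delta) else "N" for y in years)


years = range(1, 22)
for delta in (0, 2):
    print(f"delta={delta}:", type_row(7, 2, delta, years))
```

Changing only the offset slides the same N/L pattern along the years, which is why the two tables look like shifted copies of each other.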

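A practical example of this formula is the Hebrew calendar. It has a 19-year cycle ($c = 19$) with 7 leap years ($l = 7$), and the offset is 11 years ($\Delta = 11$). The current year is 5786, for which

$$\bigl((5786 + 11) \times 7\bigr) \bmod 19 = 40579 \bmod 19 = 14 \ge 7$$

so this is not a leap year. But for next year,

$$\bigl((5787 + 11) \times 7\bigr) \bmod 19 = 40586 \bmod 19 = 2 < 7$$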

so it is a leap year and will have 13 months instead of 12. You can confirm this on any number of websites; the key is to note that 5787 has both an Adar I and an Adar II.
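As a cross-check, here’s a sketch comparing the inequality, assumed to have the form $((y + \Delta)\,l) \bmod c < l$ with $c = 19$, $l = 7$, and $\Delta = 11$, against the traditional statement of the rule: years 3, 6, 8, 11, 14, 17, and 19 of each 19-year cycle are leap years. The function name hebrew_leap is mine.

```python
def hebrew_leap(year):
    # Assumed form: ((y + delta) * l) mod c < l with c=19, l=7, delta=11
    return ((year + 11) * 7) % 19 < 7


for year in (5784, 5785, 5786, 5787):
    print(year, hebrew_leap(year))

# Traditional rule: years 3, 6, 8, 11, 14, 17, and 19 of the cycle are leap
# years. Year 19 of a cycle leaves a remainder of 0 when divided by 19.
TRADITIONAL = {3, 6, 8, 11, 14, 17, 0}
assert all(hebrew_leap(y) == (y % 19 in TRADITIONAL) for y in range(1, 6000))
```

Since $11 \times 7 = 77$ leaves a remainder of 1 when divided by 19, this is the same test as $(7y + 1) \bmod 19 < 7$.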

The Gregorian calendar has a 400-year cycle with 97 leap years, but those leap years are not distributed as evenly as possible, so the formula can’t be used. If it had been used, we’d have leap years typically every fourth year but occasionally every fifth year. Pope Gregory and his people must’ve thought that would be too tricky to deal with.
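To see what the evenly distributed alternative would look like, here’s a sketch that applies the inequality (form $((y + \Delta)\,l) \bmod c < l$ assumed) with $c = 400$ and $l = 97$ and tallies the gaps between successive leap years over one cycle. The offset of 0 is an arbitrary choice of mine; a different offset just shifts the pattern.

```python
from collections import Counter


def in_cycle_leap(y, c, l, delta):
    # Assumed form of the inequality: ((y + delta) * l) mod c < l
    return ((y + delta) * l) % c < l


# Hypothetical: 97 leap years spread evenly over a 400-year cycle.
leaps = [y for y in range(800) if in_cycle_leap(y, 400, 97, 0)]

# Gaps between consecutive leap years over one full cycle (97 gaps).
gaps = [b - a for a, b in zip(leaps, leaps[1:98])]
print(Counter(gaps))
```

The gaps can only be 4 or 5 years, and since 97 gaps have to add up to 400 years, there are 85 four-year gaps and 12 five-year gaps.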

Surprisingly (to me, anyway), Reingold and Dershowitz do use this formula with the Gregorian calendar, but they use it with months instead of years. Think of the months in the Gregorian calendar as being either short or long. In a year, there are 5 short months and 7 long months, and they’re distributed like this:

    Month   1  2  3  4  5  6  7  8  9 10 11 12
            J  F  M  A  M  J  J  A  S  O  N  D
    Length  L  S  L  S  L  S  L  L  S  L  S  L

The positions of the long months correspond to our inequality with $c = 12$, $l = 7$, and $\Delta = 11$. Plug in those values, and you’ll see that the long months are 1, 3, 5, 7, 8, 10, and 12.
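Here’s a sketch that checks the long months predicted by the inequality, assumed to have the form $((y + \Delta)\,l) \bmod c < l$ with $c = 12$, $l = 7$, and $\Delta = 11$, against the 31-day months reported by Python’s calendar module. The year 2026 is an arbitrary choice, since leap years only change February, which is short either way.

```python
import calendar


def in_cycle_leap(y, c, l, delta):
    # Assumed form of the inequality: ((y + delta) * l) mod c < l
    return ((y + delta) * l) % c < l


# Long months predicted by the inequality with c=12, l=7, delta=11
predicted = [m for m in range(1, 13) if in_cycle_leap(m, 12, 7, 11)]
print(predicted)

# monthrange returns (weekday of the 1st, number of days in the month)
actual = [m for m in range(1, 13) if calendar.monthrange(2026, m)[1] == 31]
assert predicted == actual
```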

To calculate the day number within a year, it’s usually easiest to calculate the number of days in the preceding months and then add the day number within the current month. Today is March 15, so it’s Day $31 + 28 + 15 = 74$ of the year.

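Instead of looping through the lengths of the preceding months, R&D use a formula based on our inequality to count the number of long months before the current month. In its general form, counting the leap years from Year 1 through Year $y - 1$, that formula is

$$\left\lfloor \frac{l\,(y - 1 + \Delta)}{c} \right\rfloor - \left\lfloor \frac{l\,\Delta}{c} \right\rfloor$$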

where the brackets without tops represent the floor function, i.e., the integer equal to or just below what’s inside the brackets.

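Plugging in our values for $c$, $l$, and $\Delta$, with the month number $m$ in place of $y$, and doing some algebra, we get

$$\left\lfloor \frac{7m - 2}{12} \right\rfloor$$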

This is the number of long months in the year before the current month $m$.
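A brute-force check of the closed form $\lfloor (7m - 2)/12 \rfloor$ against counting the long months one by one with the inequality, assumed to have the form $((y + \Delta)\,l) \bmod c < l$ with $c = 12$, $l = 7$, and $\Delta = 11$. The function names are hypothetical.

```python
def in_cycle_leap(y, c, l, delta):
    # Assumed form of the inequality: ((y + delta) * l) mod c < l
    return ((y + delta) * l) % c < l


def long_months_before(m):
    """Closed form for the number of long months before month m."""
    return (7 * m - 2) // 12


def long_months_before_brute(m):
    """Count the long months among months 1 through m - 1 directly."""
    return sum(in_cycle_leap(k, 12, 7, 11) for k in range(1, m))


for m in range(1, 13):
    assert long_months_before(m) == long_months_before_brute(m)

print([long_months_before(m) for m in range(1, 13)])
```

Python’s // operator floors the quotient, so it plays the role of the floor brackets here.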

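If February had 30 days, the number of days in the months before the current month would be

$$30(m - 1) + \left\lfloor \frac{7m - 2}{12} \right\rfloor = \left\lfloor \frac{367m - 362}{12} \right\rfloor$$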

So to get the (Gregorian) day of the year, R&D calculate the day number as if February had 30 days and then, for months after February, subtract 1 (in a leap year) or 2 (in a common year) to account for February’s deficiency. In Python, the code looks like this:

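    def day_of_year(year, month, day):
        # Days in the preceding months if February had 30 days, plus the day
        year_day = (367 * month - 362) // 12 + day
        if month <= 2:
            return year_day      # January and February need no correction
        elif leap_year(year):
            return year_day - 1  # a 29-day February is 1 day short of 30
        else:
            return year_day - 2  # a 28-day February is 2 days short of 30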

where I’m assuming we already have a Boolean function leap_year to determine whether it’s a leap year or not. That’s not necessarily the most obvious code in the world, but it makes sense if you’ve gone through the derivation.
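For anyone who wants to run it, here’s a self-contained sketch. The leap_year function is my own filling-in of the helper assumed above, using the standard Gregorian rule, and the loop compares every day of a few years against the day number that Python’s datetime module reports.

```python
from datetime import date, timedelta


def leap_year(year):
    """Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def day_of_year(year, month, day):
    # Days in the preceding months if February had 30 days, plus the day
    year_day = (367 * month - 362) // 12 + day
    if month <= 2:
        return year_day
    elif leap_year(year):
        return year_day - 1
    else:
        return year_day - 2


print(day_of_year(2026, 3, 15))

# Check every day of common and leap years, including both kinds of century.
for y in (2026, 2024, 2000, 1900):
    d = date(y, 1, 1)
    while d.year == y:
        assert day_of_year(d.year, d.month, d.day) == d.timetuple().tm_yday
        d += timedelta(days=1)
```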

One last thing. Reingold is the co-author of a paper in which our inequality is connected to Euclid’s algorithm for calculating the greatest common divisor and Bresenham’s algorithm for plotting lines on bitmaps. Which is pretty cool.
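I won’t try to reproduce the paper’s argument, but the Bresenham flavor is easy to get a feel for. This is my own sketch, not the paper’s construction: add $l$ to an accumulator each year and call it a leap year whenever the total reaches $c$, the same kind of error-accumulator test Bresenham’s line algorithm uses to decide when to step up a row. It assumes the inequality has the form $((y + \Delta)\,l) \bmod c < l$ and that $l < c$.

```python
def in_cycle_leap(y, c, l, delta):
    # Assumed form of the inequality: ((y + delta) * l) mod c < l
    return ((y + delta) * l) % c < l


def leap_years_incremental(c, l, delta, first, last):
    """Yield leap years from first to last using only addition and a
    comparison, in the style of Bresenham's error accumulator."""
    acc = ((first - 1 + delta) * l) % c  # remainder for the year before first
    for y in range(first, last + 1):
        acc += l
        if acc >= c:  # the accumulator wrapped past c: a leap year
            acc -= c
            yield y


# Check against the inequality for several (c, l, delta) choices.
for c, l, delta in [(7, 2, 0), (7, 2, 2), (19, 7, 11), (12, 7, 11)]:
    direct = [y for y in range(1, 200) if in_cycle_leap(y, c, l, delta)]
    assert list(leap_years_incremental(c, l, delta, 1, 199)) == direct
```

The wrap happens exactly when the new remainder drops below $l$, which is why the two versions agree.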

Scientific American and Friday the 13th

March 13, 2026 at 6:48 PM by Dr. Drang

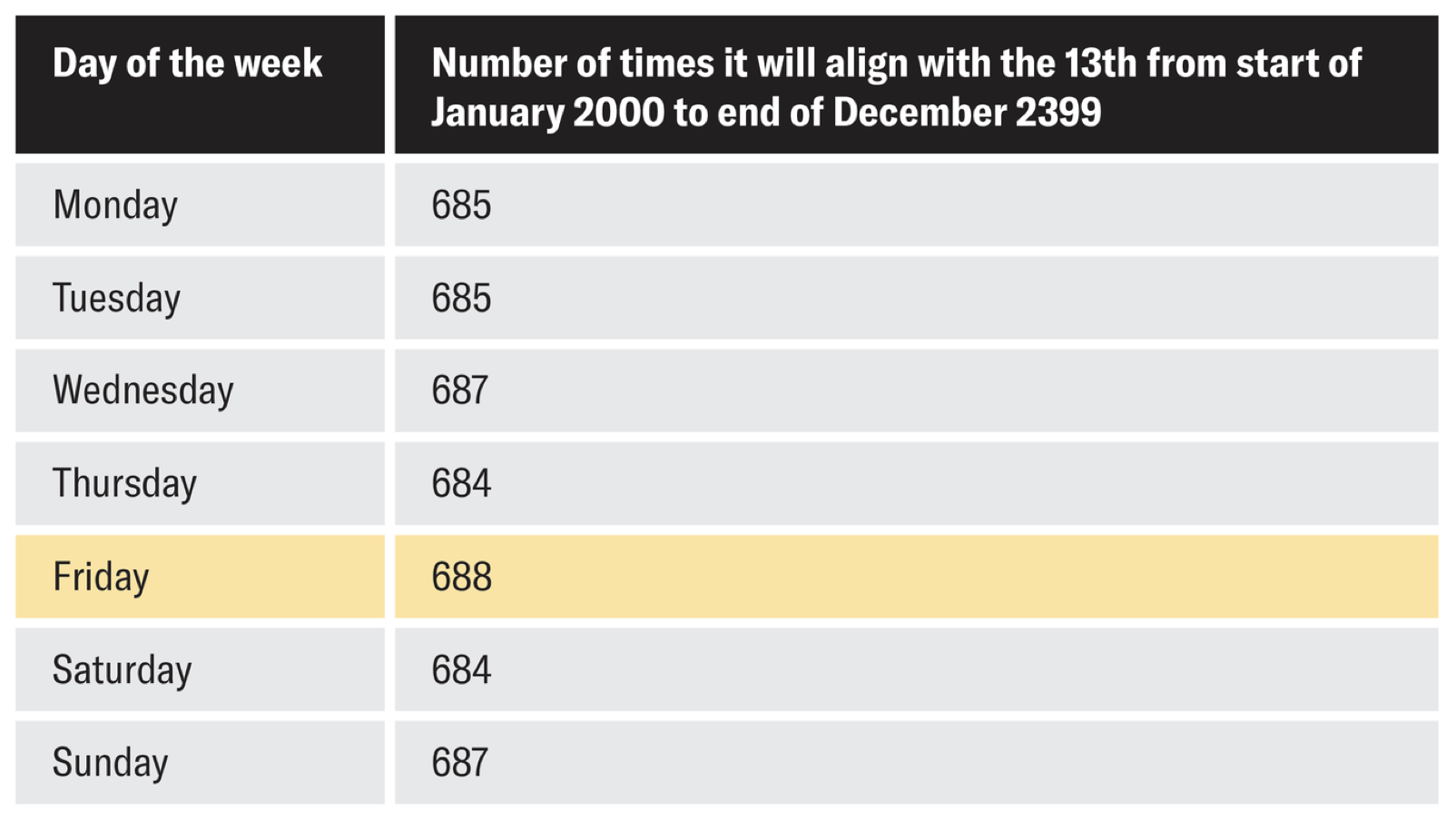

Scientific American has a fun little article today about the frequency of Friday the 13ths. It ends with this table,

and this true but overstated conclusion:

In other words, the 13th of a month will be a Friday more times than any other day of the week.

Well, yes, if you live to be 400 years old, you’ll see one more Friday the 13th than Wednesday the 13ths or Sunday the 13ths. Kind of a weird thing to focus on, though. I’m guessing you’ll have other worries by then.

But I shouldn’t be so snarky. A few years ago, I wrote a post that calculated the same set of Fri13 counts for a 400-year Gregorian cycle. I did the calculations in Mathematica and (of course) showed the code. Today, I did the same thing in Python,

    #!/usr/bin/env python

    from datetime import date

    f13s = [0]*7                    # 13ths on Monday (0) through Sunday (6)
    for y in range(1800, 2200):     # one full 400-year Gregorian cycle
        for m in range(1, 13):
            wd = date(y, m, 13).weekday()
            f13s[wd] += 1

    print(f13s)

and got a result of

    [685, 685, 687, 684, 688, 684, 687]

for Monday through Sunday. This also matches the SciAm table.

Those of us who are alive now (and have realistic longevities) won’t live through any non-leap century years. For us, the calendar has repeated, and will continue to repeat, every 28 years (1461 weeks), and over every 28-year period in our lives, there will be 48 Fri13s, the same as the number of Mon13s, Tue13s, Wed13s, and so on.
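Here’s a sketch that generalizes the script above to any span of years and checks the 28-year claim; the function name is mine. The second window straddles 2000, which was a leap year, so the 28-year repeat isn’t interrupted there.

```python
from datetime import date


def thirteenths_by_weekday(first_year, last_year):
    """Count 13ths falling on each weekday, Monday first, for the years
    first_year through last_year inclusive."""
    counts = [0] * 7
    for y in range(first_year, last_year + 1):
        for m in range(1, 13):
            counts[date(y, m, 13).weekday()] += 1
    return counts


# 28 consecutive years that don't include a skipped century leap year
print(thirteenths_by_weekday(2001, 2028))
print(thirteenths_by_weekday(1990, 2017))
```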

Of course, few of us live exactly a multiple of 28 years. Personally, I’ve lived through 113 Fri13s so far, which is just under the number of Sun13s I’ve seen (114). So I’ve been lucky?

In a Friday the 13th post from way back in 2012, I talked about how Fri13s repeat within years because the number of days in certain month sequences is a multiple of 7. So if there’s a Fri13 in April, there will be another in July because

    Apr + May + Jun
     30 +  31 +  30 = 91

which is 13 weeks. The last time that happened was in 2018.

Similarly, if there’s a Fri13 in September, there will also be one in December because

    Sep + Oct + Nov
     30 +  31 +  30 = 91

That pair of Fri13s last happened in 2024.

There’s also an 8-month sequence that adds to a multiple of 7:

    Mar + Apr + May + Jun + Jul + Aug + Sep + Oct
     31 +  30 +  31 +  30 +  31 +  31 +  30 +  31 = 245

That’s 35 weeks, so there will be another Fri13 in November of this year.

The sequences above happen every year. In non-leap years only—this year, for example—a Fri13 in February will be followed by one in March. In leap years only, a Fri13 in January will be followed by one in April. That last happened in 2012.
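The week-multiple arithmetic is easy to check with Python’s datetime module by subtracting the dates of the 13ths directly. This sketch checks each pair of months mentioned above over 1900–2099, including that the February/March and January/April pairs fail in the other kind of year. The PAIRS table is just my transcription of the list in this post.

```python
from calendar import isleap
from datetime import date

# (first month, second month, which years) transcribed from the post
PAIRS = [
    (4, 7, "all"),
    (9, 12, "all"),
    (3, 11, "all"),
    (2, 3, "common"),
    (1, 4, "leap"),
]


def applies(kind, year):
    """True if a pair of the given kind should repeat in this year."""
    if kind == "all":
        return True
    return isleap(year) == (kind == "leap")


for m1, m2, kind in PAIRS:
    for y in range(1900, 2100):
        days = (date(y, m2, 13) - date(y, m1, 13)).days
        # A whole number of weeks exactly when the pair is supposed to repeat
        assert (days % 7 == 0) == applies(kind, y), (m1, m2, y)
    print(f"{m1:2d} -> {m2:2d} ({kind}): whole weeks when expected")
```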

I covered all these repeated Fri13s in that 2012 post. Today, I learned of a new repeat that spans certain year boundaries. If there’s a Fri13 in December of a non-leap year that’s followed by a leap year, there will be a Fri13 in March of that following year. That last happened in December of 2019 and March of 2020.

Superstitious or not, you have to admit March of 2020 was pretty unlucky.
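The "last happened" years above can be found by searching backward from this year. This is a sketch with function names of my own; FRIDAY is 4 because datetime’s weekday() counts Monday as 0.

```python
from calendar import isleap
from datetime import date

FRIDAY = 4  # date.weekday() counts Monday as 0


def fri13(y, m):
    return date(y, m, 13).weekday() == FRIDAY


def last_same_year(m1, m2, through=2026, leap=None):
    """Most recent year up to `through` with Fri13s in both m1 and m2.
    leap=True or False restricts the search to leap or common years."""
    for y in range(through, 1899, -1):
        if leap is not None and isleap(y) != leap:
            continue
        if fri13(y, m1) and fri13(y, m2):
            return y
    return None


def last_dec_to_march(through=2026):
    """Most recent December Fri13 in a common year that is followed by a
    leap year whose March 13 is also a Friday."""
    for y in range(through, 1899, -1):
        if not isleap(y) and isleap(y + 1) and fri13(y, 12) and fri13(y + 1, 3):
            return y
    return None


print(last_same_year(4, 7))             # April and July
print(last_same_year(9, 12))            # September and December
print(last_same_year(1, 4, leap=True))  # January and April in a leap year
print(last_dec_to_march())              # December and the following March
```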