Data cleaning without boredom

October 9, 2021 at 10:37 PM by Dr. Drang

The worst part of data analysis is organizing the data into an analyzable form. You want to get on with the real work of analysis, but first you have to collect and massage the data into a shape that your analysis tools can deal with. Often, this takes more time than the analysis itself. Even when it doesn’t take more time, it feels like it does because you have the sense that nothing important is getting done. I’m an analyst, dammit, I shouldn’t be stuck making sure that tabs and commas are in the right place.

But an unorganized pile of numbers is useless, and wishing it was well-ordered won’t make it so. When I have to organize disparate sets of data, I treat the process as an independent intellectual challenge, something I can be proud of in its own right. This keeps me interested and makes the time go faster. For me, the key is to figure out a way to do as little organization “by hand” as I can.

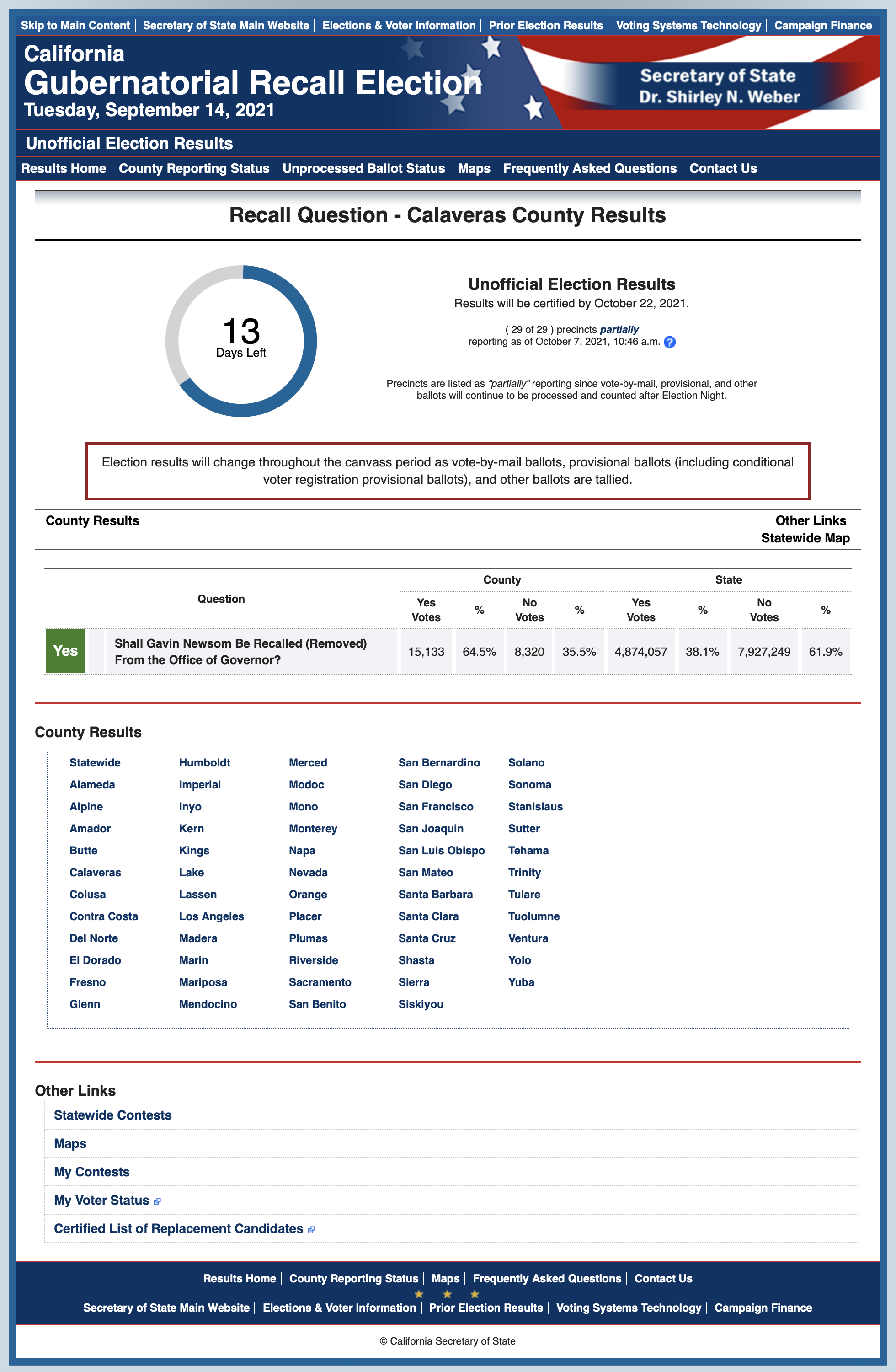

For the recall election post I wrote on Monday, the biggest problem was collecting the voting results from the California Secretary of State’s office. As far as I could tell, the SoS has published no single table of votes organized by county. Instead, there are 58 individual web pages that look like this:

Certainly, we can copy the Yes and No votes from this page and paste them into a spreadsheet or text file, but that process would have to be done 58 times. There’s no way I can do that without making several mistakes as I try to speed through it. So the challenge is in automating the process to avoid boredom-induced blunders.

First, I saw that the Calaveras County page shown above has this URL:

https://electionresults.sos.ca.gov/returns/governor-recall/county/calaveras

It’s a longish prefix followed by the lower-cased name of the county. A quick check on counties with multi-word names—of which California has a lot—showed that those URLs use dashes to separated the names, like

https://electionresults.sos.ca.gov/returns/governor-recall/county/santa-barbara

So if I have a list of the county names, I can use that list to construct the URL for each county in turn. A Google search turned up this page at Ballotpedia. I copied the list and pasted it into a text document named county-names.txt. There was one extra entry in the Ballotpedia list, “Los Angeles County not covered,” which I took to be all of Los Angeles County outside of the city. I deleted that line from the file.

Now that I can automate the generation of URLs, I need to be able to extract the Yes and No votes from an individual county’s page. Scanning through the HTML source of the Calaveras page, I found the code for the vote table:

<table class="propTblCounty stateCountyResultsTbl">

<thead>

<tr class="crsTblHdrTop">

<th colspan="3" rowspan="2">Question</th>

<th colspan="4">County</th>

<th colspan="4">State</th>

</tr>

<tr class="crsTblHdrTop">

<th>Yes<br />Votes</th>

<th>%</th>

<th>No<br />Votes</th>

<th>%</th>

<th>Yes<br />Votes</th>

<th>%</th>

<th>No<br />Votes</th>

<th>%</th>

</tr>

</thead>

<tbody>

<tr class="evenRow resultsTblBorder">

<td class="propYes" style="font-size:1.2em">Yes</td>

<td class="bold"></td>

<td class="bold">Shall Gavin Newsom</span> Be Recalled (Removed) From the Office of Governor?</td>

<td class="votesProp">15,133</td>

<td class="percProp">64.5%</td>

<td class="votesProp">8,320</td>

<td class="percProp">35.5%</td>

<td class="votesProp">4,874,057</td>

<td class="percProp">38.1%</td>

<td class="votesProp">7,927,249</td>

<td class="percProp">61.9%</td>

</tr>

</tbody>

</table>

A search through the full HTML source told me that the stateCountyResultsTbl class uniquely identifies the table of interest. And within the body of that table, the first two data cells with a votesProp class will yield the Yes and No votes for the county.

There are lots of ways to extract data from HTML. Because I’m most comfortable programming in Python, I chose to use it and the Beautiful Soup module, which I’ve used often, to get the Yes and No votes.

In the time it took me to drink a couple of cups of tea, I had the following script, collect-votes.py, written.

python:

1: #!/usr/bin/env python

2:

3: import requests

4: from bs4 import BeautifulSoup

5:

6: urlPrefix = 'https://electionresults.sos.ca.gov/returns/governor-recall/county/'

7:

8: print('County,Yes,No')

9:

10: # Loop through the counties, collecting their Yes and No votes

11: with open('county-names.txt') as f:

12: for line in f:

13: # Construct the URL and parse the HTML from it.

14: name = line.rstrip()

15: url = urlPrefix + name.lower().replace(' ', '-')

16: r = requests.get(url)

17: soup = BeautifulSoup(r.text, 'html.parser')

18:

19: # The table we want is the first (and only)

20: # of class stateCountyResultsTbl

21: voteTable = soup.find('table', {"class": "stateCountyResultsTbl"})

22:

23: # The Yes and No votes are the first two cells of class votesProp

24: # in the body of the table

25: votes = voteTable.tbody.find_all('td', {'class': 'votesProp'})[:2]

26:

27: # Strip out any thousand separators

28: votes = [ x.text.replace(',', '') for x in votes ]

29: outLine = ','.join([name] + votes)

30: print(outLine)

This script goes through the lines of county-names.txt and for each county

- Generates the URL (Line 15).

- Gets the HTML source using the Requests module (Line 16).

- Finds the vote table (Line 21).

- Finds the Yes and No votes (Line 25).

- Strips the thousands separators from the votes (Line 28).

- Prints a CSV-style line for the county (Lines 29-30).

A lot of Python programmers would say I should have used the csv module to write out the data. If output were more complicated, I would have done so, but this seemed simple enough to do with just the print function.

(You might think I stripped out the thousands separators simply so I could avoid having commas within the fields of my CSV output. No, that was just a nice side benefit. I stripped the thousands separators to make the numbers in the CSV file easy to parse. Although lots of systems can handle numbers with commas, every system can handle numbers without commas. This is in keeping with Postel’s Law: Be conservative in what you do, be liberal in what you accept from others.)

Running the script via

python collect-votes.py > alphabetical-votes.csv

gave me a CSV data file that looked like this:

County,Yes,No

Alameda,108081,465901

Alpine,225,354

Amador,12895,6957

Butte,43129,36128

Calaveras,15133,8320

Colusa,3977,1996

Contra Costa,130058,324747

Del Norte,5243,3505

El Dorado,58393,39907

Fresno,132691,125710

etc.

Clean data makes the analysis simple. I could have opened the CSV file in a spreadsheet and done all the analysis there, but I prefer using Pandas, even when it’s overkill, because it keeps my Pandas skills fresh. The script that generated the ordered data table in my earlier post was this:

python:

1: #!/usr/bin/env python

2:

3: import pandas as pd

4:

5: df = pd.read_csv('alphabetical-votes.csv')

6: df['Margin'] = df.Yes - df.No

7: df.sort_values('Margin', ascending=False, inplace=True, ignore_index=True)

8: df['CMargin'] = df.Margin.cumsum()

9: df.index += 1

10: df.to_csv('ordered-votes.csv')

The only tricky thing here is Line 9. Pandas, like Python itself, uses zero-based indexing for the rows of a data frame. I wanted one-based indexing for the table in my post because most people like lists to start with one. The output ordered-votes.csv file looks like this:

,County,Yes,No,Margin,CMargin

1,Kern,126999,78477,48522,48522

2,Placer,114643,85302,29341,77863

3,Shasta,49141,21655,27486,105349

4,Tulare,64372,41009,23363,128712

5,El Dorado,58393,39907,18486,147198

6,Stanislaus,82911,69247,13664,160862

7,Tehama,15958,6186,9772,170634

8,Madera,25638,16233,9405,180039

9,Sutter,20458,11593,8865,188904

10,Kings,19710,11242,8468,197372

etc.

Long ago, I wrote an AppleScript that converts a table in Numbers to (Multi)Markdown format and pastes it into BBEdit. So I opened ordered-votes.csv in Numbers, turned on the thousands separators in the numeric columns, and ran the conversion script to get this:

| | County | Yes | No | Margin | CMargin |

|---:|:---|---:|---:|---:|---:|

| 1 | Kern | 126,999 | 78,477 | 48,522 | 48,522 |

| 2 | Placer | 114,643 | 85,302 | 29,341 | 77,863 |

| 3 | Shasta | 49,141 | 21,655 | 27,486 | 105,349 |

| 4 | Tulare | 64,372 | 41,009 | 23,363 | 128,712 |

| 5 | El Dorado | 58,393 | 39,907 | 18,486 | 147,198 |

| 6 | Stanislaus | 82,911 | 69,247 | 13,664 | 160,862 |

| 7 | Tehama | 15,958 | 6,186 | 9,772 | 170,634 |

| 8 | Madera | 25,638 | 16,233 | 9,405 | 180,039 |

| 9 | Sutter | 20,458 | 11,593 | 8,865 | 188,904 |

| 10 | Kings | 19,710 | 11,242 | 8,468 | 197,372 |

etc.

Another script normalized the table to get all the cells aligned.

| | County | Yes | No | Margin | CMargin |

|---:|:-----------|--------:|-------:|-------:|--------:|

| 1 | Kern | 126,999 | 78,477 | 48,522 | 48,522 |

| 2 | Placer | 114,643 | 85,302 | 29,341 | 77,863 |

| 3 | Shasta | 49,141 | 21,655 | 27,486 | 105,349 |

| 4 | Tulare | 64,372 | 41,009 | 23,363 | 128,712 |

| 5 | El Dorado | 58,393 | 39,907 | 18,486 | 147,198 |

| 6 | Stanislaus | 82,911 | 69,247 | 13,664 | 160,862 |

| 7 | Tehama | 15,958 | 6,186 | 9,772 | 170,634 |

| 8 | Madera | 25,638 | 16,233 | 9,405 | 180,039 |

| 9 | Sutter | 20,458 | 11,593 | 8,865 | 188,904 |

| 10 | Kings | 19,710 | 11,242 | 8,468 | 197,372 |

etc.

This isn’t strictly necessary—the HTML generated from the Markdown will be same regardless of the alignment—but it looks nicer as I’m writing the post. By the way, since my earlier post, the numbers have changed a bit. You can now take a subset of 46 counties and get Yes votes to outnumber the No votes:

| County | Yes | No | Margin | CMargin | |

|---|---|---|---|---|---|

| 1 | Kern | 126,999 | 78,477 | 48,522 | 48,522 |

| 2 | Placer | 114,643 | 85,302 | 29,341 | 77,863 |

| 3 | Shasta | 49,141 | 21,655 | 27,486 | 105,349 |

| 4 | Tulare | 64,372 | 41,009 | 23,363 | 128,712 |

| 5 | El Dorado | 58,393 | 39,907 | 18,486 | 147,198 |

| 6 | Stanislaus | 82,911 | 69,247 | 13,664 | 160,862 |

| 7 | Tehama | 15,958 | 6,186 | 9,772 | 170,634 |

| 8 | Madera | 25,638 | 16,233 | 9,405 | 180,039 |

| 9 | Sutter | 20,458 | 11,593 | 8,865 | 188,904 |

| 10 | Kings | 19,710 | 11,242 | 8,468 | 197,372 |

| 11 | Yuba | 15,291 | 7,961 | 7,330 | 204,702 |

| 12 | Riverside | 362,958 | 355,630 | 7,328 | 212,030 |

| 13 | Butte | 43,129 | 36,128 | 7,001 | 219,031 |

| 14 | Fresno | 132,691 | 125,710 | 6,981 | 226,012 |

| 15 | Lassen | 8,538 | 1,604 | 6,934 | 232,946 |

| 16 | Calaveras | 15,133 | 8,320 | 6,813 | 239,759 |

| 17 | Tuolumne | 15,832 | 9,850 | 5,982 | 245,741 |

| 18 | Amador | 12,895 | 6,957 | 5,938 | 251,679 |

| 19 | Siskiyou | 11,282 | 6,951 | 4,331 | 256,010 |

| 20 | Glenn | 6,317 | 2,479 | 3,838 | 259,848 |

| 21 | Plumas | 5,837 | 3,407 | 2,430 | 262,278 |

| 22 | Merced | 30,210 | 27,867 | 2,343 | 264,621 |

| 23 | Mariposa | 5,378 | 3,376 | 2,002 | 266,623 |

| 24 | Colusa | 3,977 | 1,996 | 1,981 | 268,604 |

| 25 | Modoc | 2,508 | 706 | 1,802 | 270,406 |

| 26 | Del Norte | 5,243 | 3,505 | 1,738 | 272,144 |

| 27 | Inyo | 4,128 | 3,496 | 632 | 272,776 |

| 28 | Trinity | 2,699 | 2,106 | 593 | 273,369 |

| 29 | Sierra | 1,064 | 616 | 448 | 273,817 |

| 30 | Alpine | 225 | 354 | -129 | 273,688 |

| 31 | Mono | 2,245 | 2,805 | -560 | 273,128 |

| 32 | Lake | 3,728 | 5,605 | -1,877 | 271,251 |

| 33 | San Bernardino | 285,650 | 288,291 | -2,641 | 268,610 |

| 34 | San Benito | 9,181 | 12,595 | -3,414 | 265,196 |

| 35 | Nevada | 25,426 | 29,851 | -4,425 | 260,771 |

| 36 | Imperial | 12,193 | 18,210 | -6,017 | 254,754 |

| 37 | San Luis Obispo | 61,148 | 68,365 | -7,217 | 247,537 |

| 38 | Mendocino | 11,870 | 21,852 | -9,982 | 237,555 |

| 39 | San Joaquin | 94,877 | 105,405 | -10,528 | 227,027 |

| 40 | Humboldt | 18,179 | 32,585 | -14,406 | 212,621 |

| 41 | Napa | 18,681 | 38,948 | -20,267 | 192,354 |

| 42 | Yolo | 24,769 | 52,444 | -27,675 | 164,679 |

| 43 | Santa Barbara | 57,355 | 92,905 | -35,550 | 129,129 |

| 44 | Orange | 547,685 | 586,457 | -38,772 | 90,357 |

| 45 | Solano | 58,372 | 97,935 | -39,563 | 50,794 |

| 46 | Monterey | 38,169 | 80,664 | -42,495 | 8,299 |

| 47 | Ventura | 136,610 | 182,470 | -45,860 | -37,561 |

| 48 | Santa Cruz | 25,454 | 90,874 | -65,420 | -102,981 |

| 49 | Marin | 24,273 | 108,599 | -84,326 | -187,307 |

| 50 | Sonoma | 57,396 | 160,550 | -103,154 | -290,461 |

| 51 | Sacramento | 220,498 | 329,952 | -109,454 | -399,915 |

| 52 | San Mateo | 64,329 | 227,174 | -162,845 | -562,760 |

| 53 | San Diego | 504,915 | 674,417 | -169,502 | -732,262 |

| 54 | Contra Costa | 130,058 | 324,747 | -194,689 | -926,951 |

| 55 | San Francisco | 47,193 | 292,744 | -245,551 | -1,172,502 |

| 56 | Santa Clara | 166,930 | 468,680 | -301,750 | -1,474,252 |

| 57 | Alameda | 108,081 | 465,901 | -357,820 | -1,832,072 |

| 58 | Los Angeles | 855,234 | 2,076,354 | -1,221,120 | -3,053,192 |

The overall change in votes makes Newsom’s winning margin only about 3500 votes lower than it was last Monday, but the way the pluses and minuses were distributed increased our worthless count of counties by one.

This, by the way, demonstrates another advantage of automating the data collection and organization. In addition to keeping me interested, it also makes it easy to rerun the analysis if the data change. When the vote-counting is finalized later in the month, I can check the numbers again by just running a couple of scripts.